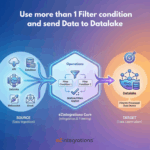

How to Ingest Data into a Datalake Using Multiple Filter Conditions

| Workflow Name: | With Target as Datalake but More Than 1 Filter Condition |
|---|---|
| Purpose: | Ingest filtered records into Datalake |
| Benefit: | Structured data in Datalake |
| Who Uses It: | Data Teams; Analytics |
| System Type: | Data Integration Workflow |
| On-Premise Supported: | Yes |
| Industry: | Analytics / Data Engineering |
| Outcome: | Filtered records ingested into Datalake |

Description

| Problem Before: | Manual ingestion from multiple sources |
|---|---|
| Solution Overview: | Automated ingestion using multiple filter conditions |
| Key Features: | Filter; validate; ingest; schedule |
| Business Impact: | Faster; accurate data availability |
| Productivity Gain: | Removes manual ingestion |
| Cost Savings: | Reduces labor and errors |
| Security & Compliance: | Secure connection |

With Target as Datalake but More Than 1 Filter Condition

The Datalake Multi Filter Workflow ingests records after applying multiple filter conditions, ensuring only high-quality and relevant data is loaded into the Datalake. This supports reliable analytics and reporting.

Advanced Filtering for High-Quality Datalake Ingestion

The system applies multiple predefined filters to incoming data, validates the results, and loads the refined records into the Datalake in near real time. This workflow helps data and analytics teams improve data quality, reduce noise, and maintain well-structured datasets.

Watch Demo

| Video Title: | API to API integration using 2 filter operations |
|---|---|
| Duration: | 6:51 |

Outcome & Benefits

| Time Savings: | Removes manual ingestion |
|---|---|
| Cost Reduction: | Lower operational overhead |
| Accuracy: | High via validation |
| Productivity: | Faster ingestion |

Industry & Function

| Function: | Data Ingestion |
|---|---|
| System Type: | Data Integration Workflow |
| Industry: | Analytics / Data Engineering |

Functional Details

| Use Case Type: | Data Integration |
|---|---|
| Source Object: | Multiple Source Records |
| Target Object: | Datalake |
| Scheduling: | Real-time or batch |
| Primary Users: | Data Engineers; Analysts |
| KPI Improved: | Data availability; ingestion speed |
| AI/ML Step: | Not required |
| Scalability Tier: | Enterprise |

Technical Details

| Source Type: | API / Database / Email |
|---|---|
| Source Name: | Multiple Sources |
| API Endpoint URL: | – |
| HTTP Method: | – |
| Auth Type: | – |
| Rate Limit: | – |
| Pagination: | – |
| Schema/Objects: | Filtered records |
| Transformation Ops: | Filter; validate; normalize |
| Error Handling: | Log and retry failed ingestion |
| Orchestration Trigger: | On upload or scheduled |
| Batch Size: | Configurable |
| Parallelism: | Multi-source concurrent |
| Target Type: | Datalake |
| Target Name: | Datalake |
| Target Method: | API / Batch Upload |
| Ack Handling: | Logging |
| Throughput: | High-volume records |
| Latency: | Seconds/minutes |
| Logging/Monitoring: | Ingestion logs |
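
The "Batch Size: Configurable" and "Error Handling: Log and retry failed ingestion" entries above can be illustrated with a minimal Python sketch. It is not the product's implementation: the `upload` callable, the `batch_size`, `max_attempts`, and `backoff_seconds` parameters, and the `ingestion` logger name are all hypothetical and stand in for whatever the platform actually exposes.

```python
import logging
import time
from typing import Any, Callable, Iterator, List, Sequence

# Hypothetical logger name; the real workflow's logging setup is not documented here.
logger = logging.getLogger("ingestion")


def batches(records: Sequence[Any], size: int) -> Iterator[Sequence[Any]]:
    """Yield consecutive slices of at most `size` records."""
    for start in range(0, len(records), size):
        yield records[start:start + size]


def ingest_with_retry(
    records: Sequence[Any],
    upload: Callable[[Sequence[Any]], None],  # assumed: raises an exception on failure
    batch_size: int = 500,
    max_attempts: int = 3,
    backoff_seconds: float = 1.0,
) -> List[Any]:
    """Upload records in batches, logging and retrying each failed batch.

    Returns the records whose batch still failed after `max_attempts` tries.
    """
    failed: List[Any] = []
    for batch in batches(records, batch_size):
        for attempt in range(1, max_attempts + 1):
            try:
                upload(batch)
                logger.info("Ingested %d records on attempt %d", len(batch), attempt)
                break
            except Exception as exc:
                logger.warning("Attempt %d failed for %d records: %s",
                               attempt, len(batch), exc)
                if attempt < max_attempts:
                    # Linear backoff before the next try.
                    time.sleep(backoff_seconds * attempt)
        else:
            # The inner loop never hit `break`, so every attempt failed.
            logger.error("Giving up on %d records", len(batch))
            failed.extend(batch)
    return failed
```

Returning the failed records instead of raising lets a scheduled run continue with the remaining batches and leaves the decision about reprocessing to the caller.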

Connectivity & Deployment

| On-Premise Supported: | Yes |
|---|---|
| Supported Protocols: | API; DB; Email |
| Security & Compliance: | Secure connection |

FAQ

1. What is the 'With Target as Datalake but More Than 1 Filter Condition' workflow?

It is a data integration workflow that ingests records into a Datalake after applying multiple filter conditions to ensure only relevant data is processed.

2. How do multiple filter conditions work in this workflow?

The workflow evaluates more than one predefined filter condition on the source data and ingests only records that satisfy all configured conditions.
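
As a rough illustration of this "all conditions must match" behavior, the following Python sketch combines several predicates with a logical AND. The field names (`status`, `amount`) and the sample records are invented for the example and do not come from the workflow itself.

```python
from typing import Any, Callable, Dict, Iterable, List

Record = Dict[str, Any]
Condition = Callable[[Record], bool]


def apply_filters(records: Iterable[Record], conditions: List[Condition]) -> List[Record]:
    """Keep only the records that satisfy every configured condition."""
    return [r for r in records if all(cond(r) for cond in conditions)]


# Two hypothetical filter conditions, combined with AND semantics.
conditions: List[Condition] = [
    lambda r: r.get("status") == "active",
    lambda r: r.get("amount", 0) > 100,
]

# Invented sample data for the example.
records: List[Record] = [
    {"id": 1, "status": "active", "amount": 250},
    {"id": 2, "status": "active", "amount": 50},    # fails the amount condition
    {"id": 3, "status": "closed", "amount": 500},   # fails the status condition
]

accepted = apply_filters(records, conditions)
print([r["id"] for r in accepted])  # prints [1]
```

Only record 1 passes both conditions; records 2 and 3 each fail one condition and would therefore be excluded from ingestion.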

3. What types of data sources are supported?

The workflow supports data ingestion from APIs, databases, and files, applying multiple filters consistently across supported sources.

4. How frequently can the workflow run?

The workflow can run on a scheduled basis or in near real-time depending on data freshness and analytics requirements.

5. What happens to records that do not meet the filter conditions?

Records that do not satisfy one or more filter conditions are excluded and are not ingested into the Datalake.

6. Who typically uses this workflow?

Data teams and analytics teams use this workflow to ensure high-quality, structured data ingestion into the Datalake.

7. Is on-premise deployment supported?

Yes, this workflow supports on-premise data sources and hybrid integration environments.

8. What are the key benefits of this workflow?

It improves data quality, ensures structured and relevant data in the Datalake, reduces noise, and supports efficient analytics and reporting.

Resources

Case Study

| Customer Name: | Data Team |
|---|---|
| Problem: | Manual ingestion from multiple sources |
| Solution: | Automated filtered ingestion |
| ROI: | Faster workflows; reduced errors |
| Industry: | Analytics / Data Engineering |
| Outcome: | Filtered records ingested into Datalake |