What Are Enterprise AI Agents? Complete Guide for Technology Leaders in 2026

March 16, 2026

Enterprise AI agents are software systems that autonomously execute multi-step tasks across your enterprise applications without constant human instruction. They perceive data, use tools like APIs and databases, make decisions, and take actions to complete a goal. In 2026, they are used for customer service, finance automation, supply chain management, HR operations, and compliance monitoring.

TL;DR

Enterprise AI agents are software systems that autonomously perceive data, reason across multiple steps, use tools (APIs, databases, search), and take actions to complete a defined goal without constant human instruction. Gartner predicts 40% of enterprise applications will embed task-specific AI agents by end of 2026, up from less than 5% in 2025. The AI agents market reached $7.8 billion in 2025 and is projected to exceed $10.9 billion in 2026. AI agents differ from AI assistants and chatbots: they act autonomously, complete multi-step tasks, use tools, and adapt their approach based on intermediate results. Over 40% of enterprise AI agent projects are at risk of cancellation by 2027, according to Gartner; governance gaps, unclear ROI, and inadequate integration infrastructure are the failure causes this guide addresses. Tool access and observability are the primary success factors. Goldfinch AI of eZintegrations delivers multi-agent orchestration with 9 native out-of-the-box agent tools and a 5,000+ endpoint API catalog for system actions, all in one governed platform.

Introduction

The phrase “enterprise AI agents” appears in every vendor briefing, analyst report, and technology conference keynote in 2026. Most of those appearances contain very little precision about what an agent actually is, how it differs from the automation your organisation already runs, and what determines whether an agent deployment succeeds or fails.

That vagueness is expensive. Gartner warns that over 40% of agentic AI projects are at risk of cancellation by 2027 if governance, observability, and ROI clarity are not established early. The organisations at greatest risk are those that adopt the language of AI agents without understanding the architecture behind them.

This guide gives you the technical substance. What an enterprise AI agent is, architecturally. How it differs from chatbots, RPA bots, and AI assistants. The four-level autonomy framework that determines what your agents can actually do. The six highest-ROI enterprise use cases. The five implementation decisions that separate successful deployments from failed ones. And how eZintegrations delivers the complete platform infrastructure for enterprise AI agents at production scale.

What Is an Enterprise AI Agent?

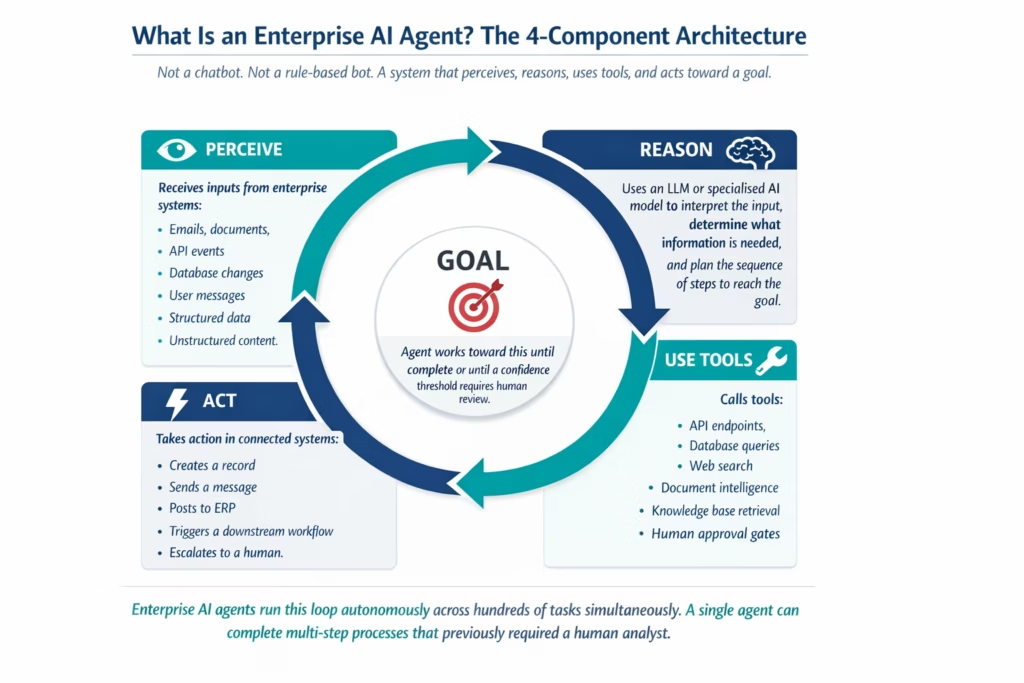

An enterprise AI agent is a software system that autonomously perceives inputs from your enterprise data and applications, reasons across multiple steps using a large language model or specialised AI model, uses tools to retrieve information or take actions, and completes a defined goal without requiring human instruction at each step.

The global AI agents market reached $7.8 billion in 2025 and is projected to exceed $10.9 billion in 2026, growing at over 45% CAGR, according to Grand View Research data. Gartner projects that by 2028, 33% of enterprise software applications will include agentic AI, representing a 33-fold increase from less than 1% in 2024.
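The growth figures can be sanity-checked with a few lines of arithmetic. The sketch below is illustrative only: `growth_rate` is a hypothetical helper, and it assumes the CAGR is measured from 2025 to the $52 billion 2030 projection quoted in this guide's conclusion, which may not be the exact horizon the research firm used.

```python
def growth_rate(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` years."""
    return (end / start) ** (1 / years) - 1

# Market size figures in billions of USD, as quoted in this guide.
yoy_2026 = growth_rate(7.8, 10.9, 1)    # single-year growth, 2025 to 2026
cagr_2030 = growth_rate(7.8, 52.0, 5)   # assumed horizon: 2025 to 2030

print(f"2025-2026 growth: {yoy_2026:.1%}")
print(f"2025-2030 CAGR:   {cagr_2030:.1%}")
```

Under that assumption, the single-year step to 2026 is a little under 40%, while the five-year compound rate comes out slightly above 45%, so the two figures are consistent with each other.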

How AI Agents Differ from Chatbots, RPA Bots, and AI Assistants

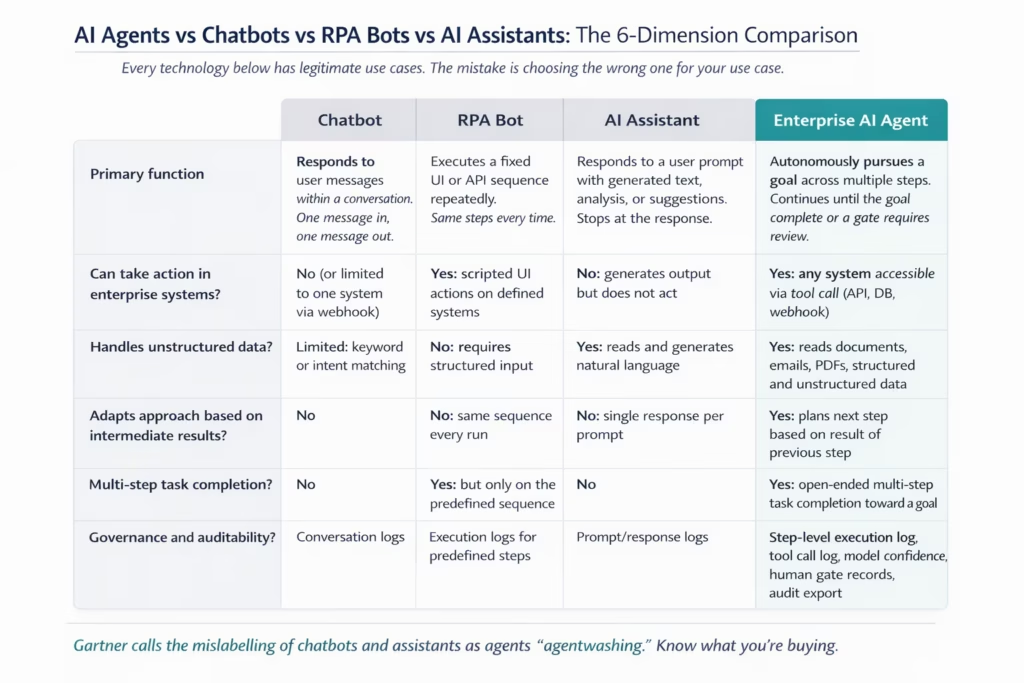

Enterprise AI agents are not chatbots, RPA bots, or AI assistants. Each of these four categories has a distinct architecture, a distinct set of capabilities, and a distinct failure mode. Conflating them is one of the primary causes of failed AI agent projects.

Gartner specifically names “agentwashing” as a market problem: vendors labelling AI assistants or chatbots as agents to capture the attention around agentic AI. Understanding the distinctions protects your organisation from buying the wrong technology for the use case.

Chatbots respond to user messages within a defined conversation flow. They’re designed for natural language interaction within a single session. They don’t take actions in external systems (beyond simple integrations like submitting a form), they don’t adapt their approach based on intermediate results, and they don’t complete multi-step goals autonomously. A chatbot that tells you your order status is not an agent: it retrieved one data point in response to one message.

RPA bots (Robotic Process Automation) execute predefined sequences of UI or API actions on structured data. They are excellent for high-volume, zero-variation, fully structured processes. They don’t handle unstructured data, they don’t adapt when inputs vary, and they fail on any case that falls outside the predefined script. RPA is rule-based automation, not agentic AI.

AI assistants respond to prompts with generated text, analysis, code, or suggestions. They read documents, answer questions, and draft content. But they stop at the response. They don’t take actions in your systems, they don’t pursue goals across multiple steps, and they don’t iterate based on what they find. An AI assistant that summarises a contract is not an agent: it generated text in response to a prompt.

Enterprise AI agents pursue goals autonomously. They perceive inputs, reason about what tools to use next, call those tools (an API endpoint, a database query, a document intelligence step, a web search), observe the result, and determine the next step based on what they found. This loop continues until the goal is complete or a confidence threshold requires human review. That architectural difference is what separates agents from the other three categories.
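The loop described above can be sketched in a few dozen lines. This is a minimal illustration, not any vendor's implementation: `Step`, `AgentResult`, `run_agent`, the toy planner, and the stub tools are all hypothetical names, and a real agent would replace the planner with a call to a language model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

History = List[Tuple[str, object]]  # (tool name, observation) pairs


@dataclass
class Step:
    tool: str           # name of the tool to call next
    args: dict          # keyword arguments for that tool
    confidence: float   # planner's confidence in this step, 0..1


@dataclass
class AgentResult:
    status: str                          # "done", "needs_human", or "step_limit"
    history: History = field(default_factory=list)


def run_agent(
    plan_next: Callable[[History], Optional[Step]],
    tools: Dict[str, Callable[..., object]],
    min_confidence: float = 0.8,   # assumed threshold, tune per use case
    max_steps: int = 10,           # safety limit so the loop always ends
) -> AgentResult:
    history: History = []
    for _ in range(max_steps):
        step = plan_next(history)                 # reason: pick the next tool
        if step is None:                          # planner says the goal is met
            return AgentResult("done", history)
        if step.confidence < min_confidence:      # hand over to a human
            return AgentResult("needs_human", history)
        observation = tools[step.tool](**step.args)   # act
        history.append((step.tool, observation))      # observe
    return AgentResult("step_limit", history)


# Toy planner standing in for an LLM: look up an order, then notify.
def toy_planner(history: History) -> Optional[Step]:
    called = [name for name, _ in history]
    if "get_order" not in called:
        return Step("get_order", {"order_id": "A1"}, 0.95)
    if "notify_customer" not in called:
        order = history[0][1]
        text = f"Order {order['id']} is {order['status']}"
        return Step("notify_customer", {"message": text}, 0.9)
    return None


stub_tools = {
    "get_order": lambda order_id: {"id": order_id, "status": "delayed"},
    "notify_customer": lambda message: f"sent: {message}",
}

result = run_agent(toy_planner, stub_tools)
print(result.status, result.history)
```

The design point is that the planner sees the full history on every iteration, which is what lets the agent adapt its next step to what the previous tool call returned.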

The 4-Level AI Agent Autonomy Framework

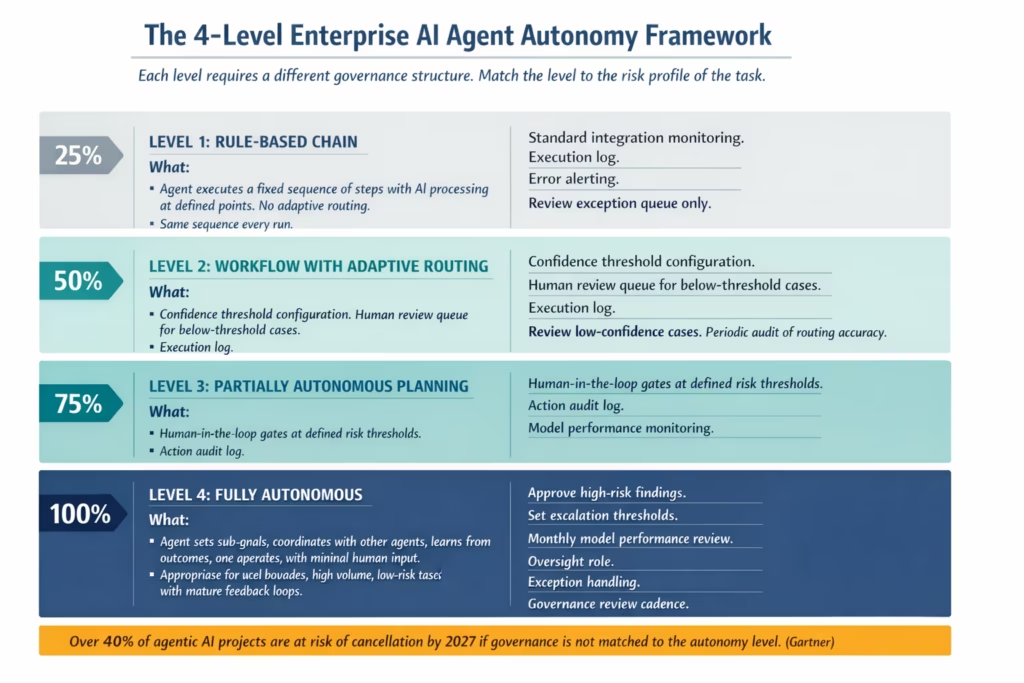

Enterprise AI agents operate at different levels of autonomy, and the level your organisation deploys determines what supervision structure your governance team needs to build around each agent.

By 2028, at least 15% of day-to-day work decisions will be made autonomously by AI agents, up from virtually zero in 2024, according to Gartner. That trajectory is clear, but it doesn’t mean every enterprise agent should operate at maximum autonomy immediately. The four-level framework below maps the autonomy spectrum to the governance requirements and use cases appropriate for each level.

What Tools Do Enterprise AI Agents Use?

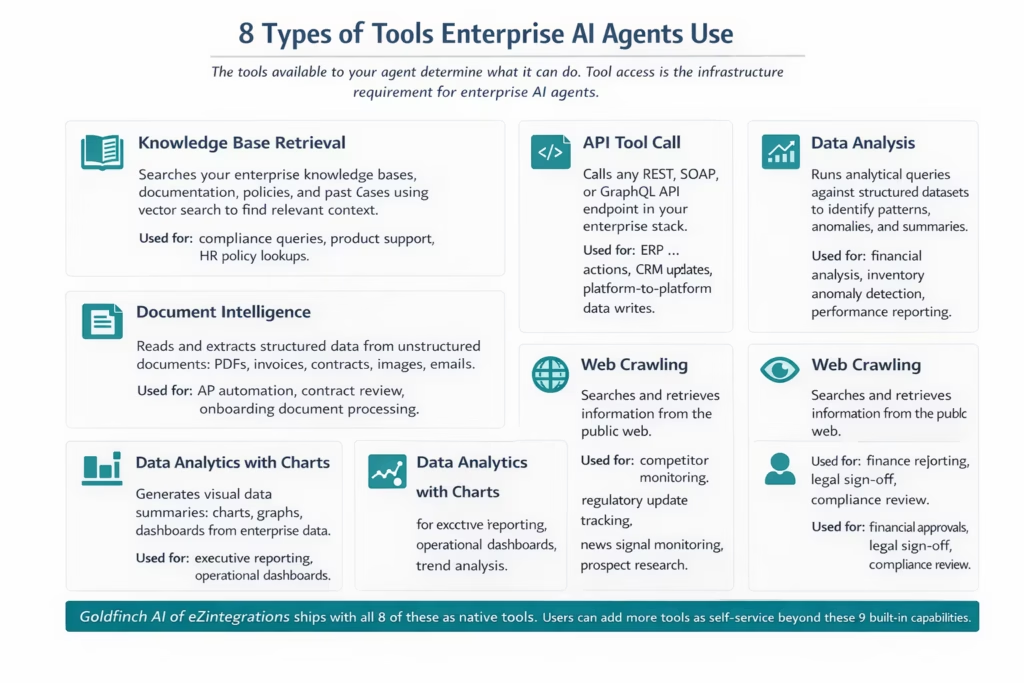

Enterprise AI agents reason about which tools to use and call them autonomously to complete each step of a goal. The tool set available to an agent is the primary determinant of what tasks the agent can complete and how reliably it can complete them.

93% of IT leaders report intentions to introduce autonomous agents within the next two years, according to MuleSoft and Deloitte Digital’s 2025 Connectivity Benchmark report. The most critical success factor those same leaders identify: whether the agent has access to the systems and data it needs to complete real enterprise tasks. Tool access is not a minor implementation detail. It is the core infrastructure requirement.
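One way to picture tool access is as a registry that defines exactly what an agent can and cannot call. The sketch below is a generic illustration under stated assumptions: `ToolRegistry` and the two stub tools are hypothetical and do not represent the API of any particular platform.

```python
from typing import Callable, Dict


class ToolRegistry:
    """Holds the tools an agent may call, with a description for the planner."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., object]] = {}
        self._descriptions: Dict[str, str] = {}

    def register(self, name: str, description: str):
        """Decorator that adds a function to the registry under `name`."""
        def decorator(fn: Callable[..., object]) -> Callable[..., object]:
            self._tools[name] = fn
            self._descriptions[name] = description
            return fn
        return decorator

    def describe(self) -> Dict[str, str]:
        """What the reasoning layer is told it can use."""
        return dict(self._descriptions)

    def call(self, name: str, **kwargs) -> object:
        if name not in self._tools:
            # The agent can reason about a task but has no way to act on it.
            raise KeyError(f"agent has no access to tool '{name}'")
        return self._tools[name](**kwargs)


registry = ToolRegistry()


@registry.register("lookup_order", "Fetch an order record by id (stubbed)")
def lookup_order(order_id: str) -> dict:
    return {"id": order_id, "status": "shipped"}


@registry.register("search_policies", "Search the policy knowledge base (stubbed)")
def search_policies(query: str) -> list:
    return [f"policy matching '{query}'"]


print(registry.describe())
print(registry.call("lookup_order", order_id="A1"))
```

A task that needs a system missing from the registry fails at the `call` step regardless of how capable the reasoning model is, which is why tool access is treated here as an infrastructure requirement.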

The 6 Highest-ROI Enterprise AI Agent Use Cases in 2026

The six enterprise AI agent use cases with the highest documented ROI share a common characteristic: they involve tasks that combine data retrieval from multiple systems, judgment across variable inputs, and action in enterprise software, which is the specific task profile where human analysts are most costly and AI agents are most effective.

ServiceNow documented 80% autonomous handling of customer support inquiries and a 52% reduction in time needed for complex case resolution, generating $325 million in annualised value, according to Datagrid’s analysis of Forrester data. That’s one organisation. The pattern holds across the six use cases below.

Customer service resolution is the most widely deployed enterprise AI agent use case because the ROI proof points are the clearest and the task profile maps perfectly to agent strengths: variable natural language inputs, multi-system data retrieval (order database, knowledge base, customer history), probabilistic routing decisions, and action (drafting a response or escalating to a human). The 80% autonomous resolution rate documented in the ServiceNow case is a benchmark that many organisations report approaching within 6-12 months of mature agent deployment.

AP invoice processing is the highest-frequency finance use case. Invoices arrive in dozens of formats from hundreds of vendors. A rule-based system requires a format template for each vendor. An AI agent with document intelligence reads any invoice, validates it against your vendor master and purchase order database, posts approved invoices to your ERP, and routes exceptions below a confidence threshold to a human reviewer. The exception rate for a trained AP agent typically falls below 5%.
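The validate-then-route logic for an AP agent might look like the sketch below. It is a simplified illustration: the `Invoice` fields, the 0.9 confidence threshold, and the amount tolerance are assumptions, and the sketch additionally assumes that failed validations (unknown vendor, missing or mismatched purchase order) go to a human rather than being rejected automatically.

```python
from dataclasses import dataclass
from typing import Dict, Set, Tuple


@dataclass
class Invoice:
    vendor_id: str
    po_number: str
    amount: float
    extraction_confidence: float   # document-reading confidence, 0..1


def route_invoice(
    inv: Invoice,
    vendor_master: Set[str],
    purchase_orders: Dict[str, float],   # PO number -> approved amount
    min_confidence: float = 0.9,         # assumed threshold
    tolerance: float = 0.01,             # assumed allowed amount difference
) -> Tuple[str, str]:
    """Return (destination, reason) for a single extracted invoice."""
    if inv.extraction_confidence < min_confidence:
        return ("human_review", "low extraction confidence")
    if inv.vendor_id not in vendor_master:
        return ("human_review", "unknown vendor")
    po_amount = purchase_orders.get(inv.po_number)
    if po_amount is None:
        return ("human_review", "no matching purchase order")
    if abs(inv.amount - po_amount) > tolerance:
        return ("human_review", "amount does not match purchase order")
    return ("post_to_erp", "validated")


vendors = {"V1"}
pos = {"PO-1": 100.0}
print(route_invoice(Invoice("V1", "PO-1", 100.0, 0.97), vendors, pos))
print(route_invoice(Invoice("V1", "PO-1", 100.0, 0.50), vendors, pos))
```

Returning a reason alongside the destination gives the human reviewer the context for each exception and gives you data for tuning the threshold later.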

Contract review and risk analysis is the highest-complexity legal use case. The agent reads the contract, searches applicable regulations and your internal policy database, identifies clauses that deviate from your standard terms, flags missing required provisions, and produces a structured risk summary with clause-level citations. Legal teams that previously spent 3-4 hours on a standard contract first-pass review now spend 20-30 minutes reviewing the agent’s structured summary.

HR onboarding orchestration is a multi-system coordination use case. The agent reads new hire records from your HRIS, classifies the role type and required provisioning, triggers downstream workflows (Active Directory provisioning, SaaS app access, training path assignment, compliance certification), monitors completion status across all triggered workflows, follows up on delays, and produces a day-one readiness confirmation. The agent coordinates actions across 5-10 systems that previously required manual coordination from an HR team.

Supply chain monitoring agents watch inventory levels, supplier delivery signals, and demand forecast data simultaneously, identify risk patterns before they become shortages or delays, generate structured alerts with recommended actions, and coordinate with procurement agents when threshold events require a response. The documented outcome from Bradesco’s AI agent deployment across similar use cases: 17% of employee capacity freed and 22% reduction in lead times.

Sales intelligence research agents retrieve company information from multiple sources (company website, news feeds, public filings, intent data platforms), analyse firmographic fit against your ideal customer profile criteria, identify recent buying signals, and produce a structured account brief that your sales rep can review in 5 minutes rather than spend 45 minutes building manually.

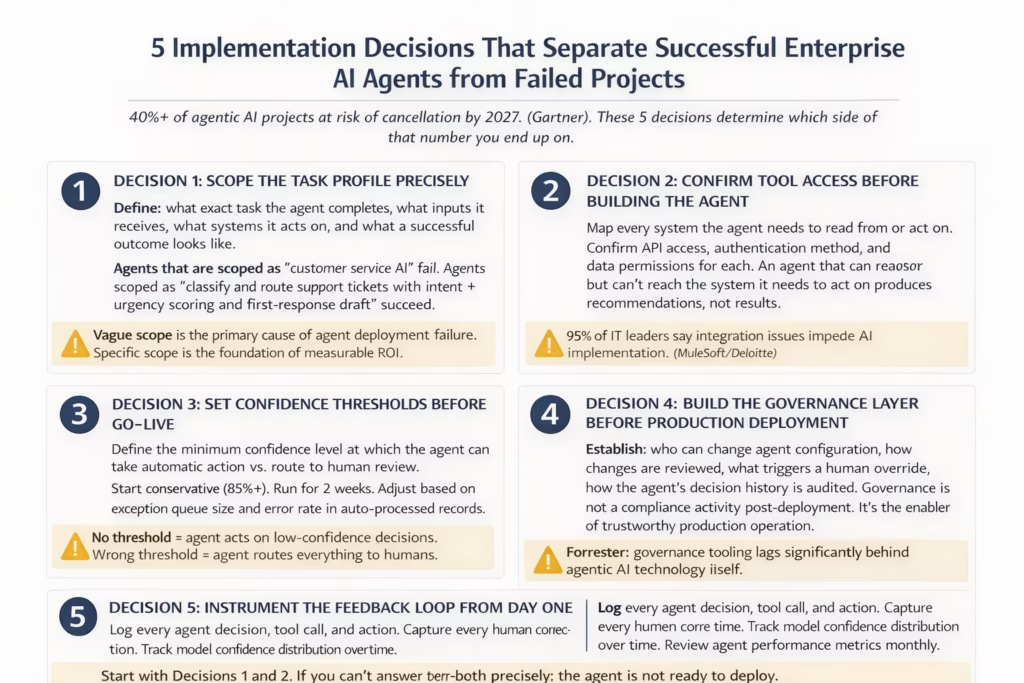

The 5 Implementation Decisions That Determine Success or Failure

Over 40% of enterprise AI agent projects are at risk of cancellation by 2027 if governance, observability, and ROI clarity are not established, according to Gartner. The five implementation decisions below are the specific choices that distinguish the projects that reach production from those at cancellation risk.

Only 34% of organisations successfully implement agentic AI systems despite high investment levels, according to Digital Commerce 360 data. The gap between investment intent (96% plan to expand AI agent usage) and successful implementation is almost entirely explained by these five decisions.

Decision 1: Scope precisely. The most common agent deployment failure is attempting to solve a broadly defined problem. “Improve customer service with AI” is not an agent scope. “Classify inbound support tickets by intent and urgency, route to the correct team queue, and generate a first-response draft for agent review” is an agent scope. The more precisely you define the task profile before you build the agent, the more directly you can measure success and identify where the agent needs improvement.

Decision 2: Confirm tool access before building. This decision is so commonly missed that 95% of IT leaders explicitly name integration issues as the barrier to AI implementation. Before you configure an agent, walk through every system it needs to read or act on and confirm: Is the API accessible? What authentication method is required? What data fields are available in the API response? What permissions does the agent service account need? Discovering a missing API integration after the agent is built adds weeks to deployment.
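The four questions above can be captured as a pre-build checklist that your team fills in per system. The sketch below is one possible shape for it; `SystemAccess`, `preflight`, and the example systems and field names are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SystemAccess:
    name: str
    api_accessible: bool
    auth_method: str              # empty string if not yet confirmed
    required_fields: List[str]    # fields the agent needs to read
    available_fields: List[str]   # fields the API response actually exposes
    permissions_granted: bool     # service account has the needed permissions


def preflight(systems: List[SystemAccess]) -> List[str]:
    """Return a list of blocking problems; an empty list means ready to build."""
    problems: List[str] = []
    for s in systems:
        if not s.api_accessible:
            problems.append(f"{s.name}: API not accessible")
        if not s.auth_method:
            problems.append(f"{s.name}: authentication method not confirmed")
        missing = [f for f in s.required_fields if f not in s.available_fields]
        if missing:
            problems.append(f"{s.name}: missing fields {missing}")
        if not s.permissions_granted:
            problems.append(f"{s.name}: service account permissions not granted")
    return problems


systems = [
    SystemAccess("ERP", True, "oauth2", ["po_number", "amount"],
                 ["po_number", "amount", "vendor_id"], True),
    SystemAccess("CRM", True, "", ["account_owner"], ["account_name"], False),
]

for problem in preflight(systems):
    print(problem)
```

Running a check like this before configuration surfaces a missing integration while it costs a conversation rather than a rebuild.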

Decision 3: Set confidence thresholds before go-live. Every agent action should have a defined minimum confidence level at which it proceeds automatically versus routes to human review. Start conservative. After two weeks, review what the human reviewers are finding: if they’re approving 95% of routed items without changes, your threshold is too high. If auto-processed items are producing errors, your threshold is too low. Adjust based on actual production data.
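The review rule described above can be expressed as a small function. This is a sketch under stated assumptions: the 95% approval figure comes from the text, while the 1% error-rate limit and the 0.05 adjustment step are illustrative values you would set for your own risk tolerance.

```python
def route(confidence: float, threshold: float) -> str:
    """Proceed automatically at or above the threshold, otherwise escalate."""
    return "auto" if confidence >= threshold else "human_review"


def review_threshold(
    threshold: float,
    routed_total: int,               # items sent to human review
    routed_approved_unchanged: int,  # of those, approved without changes
    auto_total: int,                 # items processed automatically
    auto_errors: int,                # of those, later found to be wrong
    approve_rate_limit: float = 0.95,
    error_rate_limit: float = 0.01,  # assumed acceptable error rate
    step: float = 0.05,              # assumed adjustment size
) -> float:
    """Suggest a new threshold from production review data."""
    approve_rate = routed_approved_unchanged / routed_total if routed_total else 0.0
    error_rate = auto_errors / auto_total if auto_total else 0.0
    if error_rate > error_rate_limit:
        # Auto-processed items are producing errors: threshold is too low.
        return min(1.0, round(threshold + step, 2))
    if approve_rate >= approve_rate_limit:
        # Reviewers approve almost everything: threshold is too high.
        return max(0.0, round(threshold - step, 2))
    return threshold


print(route(0.92, 0.90))
print(review_threshold(0.90, 100, 97, 500, 0))
print(review_threshold(0.90, 100, 50, 500, 20))
```

Errors are checked first on purpose: when both signals fire, raising the threshold is the conservative choice.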

Decision 4: Build the governance layer before production deployment. This means: who has authority to change the agent’s configuration, what review process governs those changes, what conditions trigger a human override of an agent decision, and how the agent’s decision history is logged and auditable. Forrester specifically notes that “governance tooling lags significantly behind agentic AI technology itself.” Build your governance structure proactively, not reactively after an incident.

Decision 5: Instrument the feedback loop from day one. Log every agent decision, every tool call, and every action the agent takes. Capture every instance where a human reviewer corrects an agent output. Track the model’s confidence score distribution over time. Schedule monthly reviews of agent performance metrics. Agents without feedback loops experience model drift: the model’s accuracy on production data gradually diverges from its training performance. The drift is silent until a large error surfaces in production.
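A feedback loop starts with a consistent record per decision. The sketch below logs each decision as one JSON line and compares the human correction rate between two periods as a rough drift signal. The record fields, the in-memory list standing in for a log store, and the 5-point alert margin are all assumptions; correction rate is only a proxy for accuracy.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from statistics import mean
from typing import Dict, List


@dataclass
class DecisionRecord:
    timestamp: str
    tool: str
    action: str
    confidence: float
    human_corrected: bool


def log_decision(log: List[str], tool: str, action: str,
                 confidence: float, human_corrected: bool = False) -> None:
    """Append one decision to the log as a JSON line."""
    record = DecisionRecord(datetime.now(timezone.utc).isoformat(),
                            tool, action, confidence, human_corrected)
    log.append(json.dumps(asdict(record)))


def summarize(log: List[str]) -> Dict[str, float]:
    """Aggregate a non-empty log into the metrics reviewed each month."""
    records = [json.loads(line) for line in log]
    return {
        "decisions": len(records),
        "correction_rate": mean(1.0 if r["human_corrected"] else 0.0 for r in records),
        "mean_confidence": mean(r["confidence"] for r in records),
    }


def drift_alert(baseline: Dict[str, float], current: Dict[str, float],
                max_increase: float = 0.05) -> bool:
    """Flag when corrections rise by more than `max_increase` over baseline."""
    return current["correction_rate"] - baseline["correction_rate"] > max_increase


january: List[str] = []
for corrected in (False, False, False, False):
    log_decision(january, "document_intelligence", "post_invoice", 0.95, corrected)

february: List[str] = []
for corrected in (False, True, False, False):
    log_decision(february, "document_intelligence", "post_invoice", 0.93, corrected)

print(summarize(february))
print(drift_alert(summarize(january), summarize(february)))
```

Because the alert compares periods rather than waiting for a single large error, it gives the monthly review something concrete to act on before drift surfaces in production.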

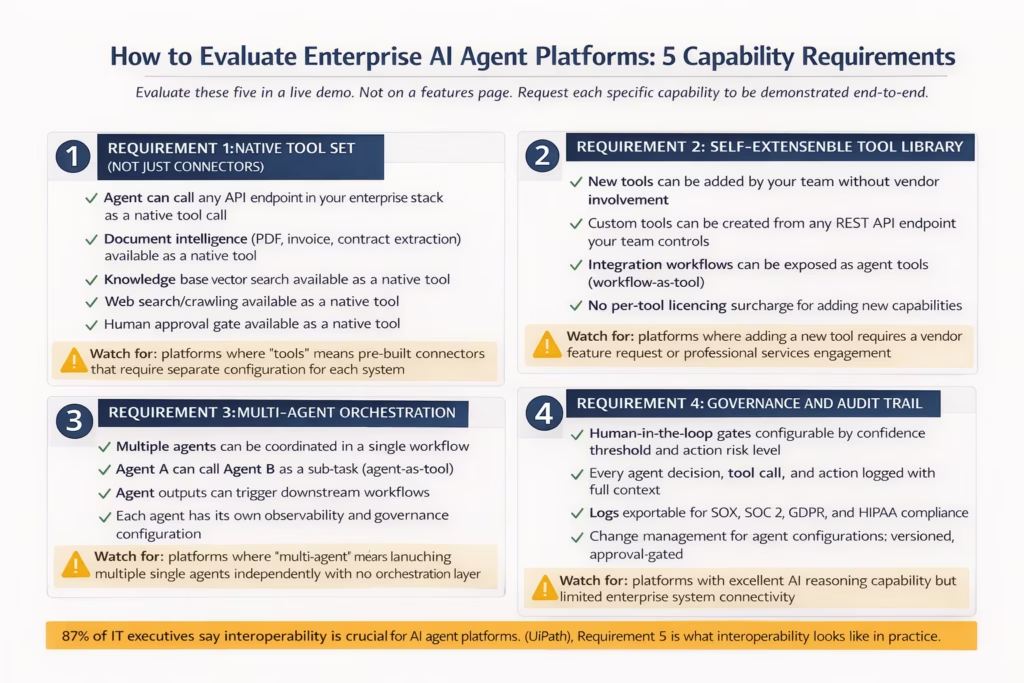

How to Evaluate Enterprise AI Agent Platforms

Enterprise AI agent platform selection comes down to five capability requirements. Platforms that meet all five are production-ready for enterprise deployment. Platforms that meet three or fewer are appropriate for pilots but will create operational problems at scale.

87% of IT executives say interoperability is “very important or crucial” for AI agent platforms, according to UiPath research. That figure captures one of the five requirements. The full set is broader.

The most important distinction in enterprise AI agent platform evaluation is between AI reasoning capability and system connectivity. Most platforms entering the market in 2026 have capable AI reasoning layers: they can plan multi-step tasks, call tools, and interpret results. The differentiating factor is whether those reasoning capabilities connect to the actual systems in your enterprise stack, and whether your team can add new system connections without vendor involvement.

An agent platform with excellent reasoning and limited connectivity produces pilots. An agent platform with broad connectivity, governance controls, a self-extensible tool library, and multi-agent orchestration produces production automation that can scale across your operations.

How eZintegrations Delivers Enterprise AI Agent Orchestration

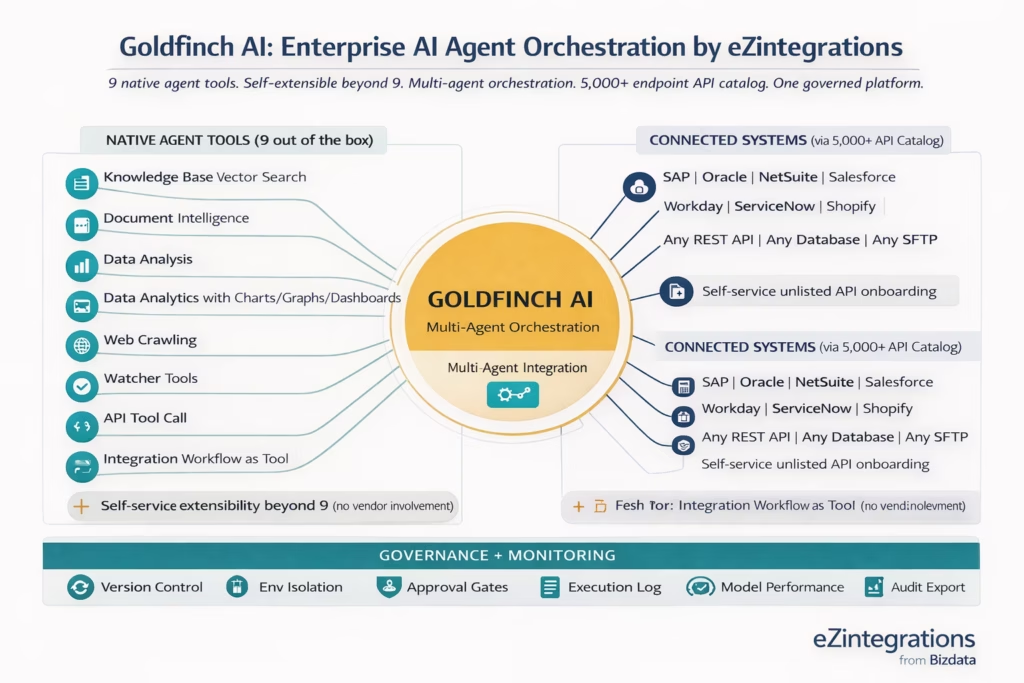

eZintegrations delivers enterprise AI agent orchestration through Goldfinch AI, a multi-agent platform with 9 native out-of-the-box agent tools, a self-extensible tool library, and a 5,000+ endpoint API catalog for system connectivity, all governed by version-controlled workflow management and unified execution monitoring.

The 9 native tools available to every Goldfinch AI agent are: Knowledge Base Vector Search, Document Intelligence, Data Analysis, Data Analytics with Charts/Graphs/Dashboards, Web Crawling, Watcher Tools, API Tool Call, Integration Workflow as Tool, and Integration Flow as MCP. These cover the full tool set required for the six enterprise use cases described in the use cases section above. An AP invoice agent needs Document Intelligence and API Tool Call. A contract review agent needs Document Intelligence, Knowledge Base Vector Search, and the Web Crawling tool for regulatory lookups. A supply chain monitoring agent needs Watcher Tools, Data Analysis, and API Tool Call. All of these are available natively, with no additional connector configuration.

Self-service tool extensibility means your team can add tools beyond the 9 native ones without submitting a vendor support ticket, waiting for a feature release, or writing connector code. Any REST API your team controls can be exposed as an agent tool. Any integration workflow in eZintegrations can be exposed as a tool that an agent can call. This means your existing integration workflows become agent capabilities immediately, without rebuilding them as agent-specific tools.

The 5,000+ endpoint API catalog is what connects Goldfinch AI agents to your enterprise systems. When an agent uses the API Tool Call tool, it’s drawing from the same catalog that your integration workflows use. SAP S/4HANA endpoints, Oracle ERP Cloud endpoints, Salesforce REST APIs, Workday APIs, ServiceNow REST, and thousands more are pre-catalogued. If a system your agent needs to reach isn’t in the catalog, your team adds it as a self-service action.

Unified governance means every agent action, tool call, and decision is logged in the same execution monitoring system as your integration workflows. The same execution log. The same alert system. The same version control. The same environment isolation (Dev/Test/Staging/Production with approval-gated promotions). The same audit trail export for SOX, SOC 2, and GDPR compliance.

To explore the broader AI workflow automation context in which Goldfinch AI agents operate, see our enterprise AI workflow automation guide.

Frequently Asked Questions

1. What is an enterprise AI agent?

An enterprise AI agent is a software system that autonomously perceives enterprise data, reasons using an AI model, uses tools (such as API calls, document reading, database queries, and web search) to retrieve information or take actions, and completes a multi-step task without requiring human instruction at each step. It operates within governance controls, including audit trails, confidence thresholds, and human approval gates.

2. How are enterprise AI agents different from chatbots?

Chatbots respond to messages within a conversation and stop after generating a reply. Enterprise AI agents pursue goals autonomously across multiple steps, use tools to interact with real enterprise systems such as ERP, CRM, and databases, and adapt their approach based on intermediate results. A chatbot tells you the order status, while an AI agent retrieves the order, identifies the delay cause, contacts the supplier through an API, updates the ERP record, and notifies the customer.

3. What tools do enterprise AI agents need?

Enterprise AI agents require document intelligence for PDF and invoice processing, knowledge base retrieval for policy and regulation lookup, API tool call access to act on ERP, CRM, and other enterprise systems, structured data analysis capability, web crawling for external information retrieval, watcher tools for condition monitoring, and human approval gates for governance of high-risk actions. Goldfinch AI in eZintegrations includes these as native tools.

4. Why are over 40% of enterprise AI agent projects at risk of failing?

Gartner's warning, together with the other research cited in this guide, points to four primary causes: governance gaps, where no oversight structure exists for agent decisions; unclear ROI, where agents are deployed without measurable success criteria; inadequate integration infrastructure, where agents can reason but cannot access the systems required to act; and model drift, where there is no feedback loop to detect declining accuracy over time. These failures are preventable with proper architecture and governance.

5. How long does it take to deploy an enterprise AI agent?

With a platform that provides pre-built agent tools and a large API integration catalog, a Level 2 enterprise AI agent, such as support ticket routing or invoice processing, can reach production within two to four weeks from initial scoping. A Level 3 agent, such as contract review or supply chain monitoring, typically requires four to eight weeks, depending on API access readiness and the number of iterations required to calibrate confidence thresholds.

6. What is Goldfinch AI?

Goldfinch AI is the multi-agent orchestration platform within eZintegrations, developed by Bizdata. It includes nine native agent tools: Knowledge Base Vector Search, Document Intelligence, Data Analysis, Data Analytics with Charts/Graphs/Dashboards, Web Crawling, Watcher Tools, API Tool Call, Integration Workflow as Tool, and Integration Flow as MCP. Additional tools can be added by users, and the platform connects to enterprise systems through the eZintegrations catalog of more than five thousand API endpoints.

7. How does multi-agent orchestration differ from a single AI agent?

A single AI agent works toward one objective using its available tools. Multi-agent orchestration coordinates multiple specialised agents: one agent can call another as a sub-task, each agent has its own tools and governance configuration, and the system collectively completes complex tasks. For example, a procurement orchestration may use a research agent, a risk assessment agent, and a supplier communication agent working together in a coordinated workflow.
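The procurement example can be sketched as an orchestrator that calls specialised agents as sub-tasks. This is a toy illustration: the three agents are stub functions with hard-coded outputs, and the names, the 0.9 on-time cutoff, and the data shapes are all hypothetical.

```python
from typing import Callable, Dict

Agent = Callable[[dict], dict]   # an agent takes a task dict, returns findings


def research_agent(task: dict) -> dict:
    # Stub: a real agent would query supplier systems and external sources.
    return {"supplier": task["supplier"], "on_time_rate": 0.82}


def risk_agent(task: dict) -> dict:
    # Assumed rule: below 90% on-time delivery counts as high risk.
    return {"risk": "high" if task["on_time_rate"] < 0.9 else "low"}


def communication_agent(task: dict) -> dict:
    return {"message": f"Requesting delivery plan from {task['supplier']}"}


def procurement_orchestrator(task: dict, agents: Dict[str, Agent]) -> dict:
    """Coordinate sub-agents; each result feeds the next decision."""
    findings = agents["research"](task)
    assessment = agents["risk"](findings)
    result = {**findings, **assessment}
    if assessment["risk"] == "high":
        # Only involve the communication agent when the risk warrants it.
        result.update(agents["communication"](findings))
    return result


agents = {
    "research": research_agent,
    "risk": risk_agent,
    "communication": communication_agent,
}

outcome = procurement_orchestrator({"supplier": "Acme Metals"}, agents)
print(outcome)
```

Passing the agents in as a dictionary keeps each one independently replaceable, which mirrors the idea that every agent carries its own tools and governance configuration.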

Conclusion

The trajectory is clear. Gartner projects 40% of enterprise applications will include task-specific AI agents by end of 2026, up from less than 5% in 2025. The AI agents market hit $7.8 billion in 2025 and is heading to $10.9 billion in 2026 and $52 billion by 2030. Enterprise organisations that wait are not pausing: they’re falling behind.

But the 40% of projects at risk of cancellation by 2027 demonstrate that the technology alone isn’t sufficient. The organisations that succeed are those that match their agent autonomy level to their governance infrastructure, confirm tool access to all required systems before building, set confidence thresholds that make automatic action trustworthy, and instrument the feedback loop from the first production run.

Goldfinch AI of eZintegrations is built to give your organisation all of the above. Nine native agent tools that cover the complete enterprise use case spectrum. A self-extensible tool library that grows with your requirements without vendor dependency. A 5,000+ endpoint API catalog that connects agents to every system in your stack. And unified governance that applies the same version control, environment isolation, and audit trail to agent workflows as to every other automation your team operates.

Your enterprise AI agent programme doesn’t need to start with a 6-month platform evaluation and a 12-month build cycle.

Book a free demo to see Goldfinch AI in action and walk through a live enterprise agent deployment from tool configuration to production run in one session.

Explore Goldfinch AI multi-agent orchestration. Explore AI Workflows for the LLM-powered pipeline layer that AI agents connect to. Visit the Automation Hub for 1,000+ pre-built templates including AI agent starters.