AI-Native iPaaS vs AI-Bolted-On: Why the Difference Defines Your Automation Future

March 16, 2026

AI-native iPaaS platforms build AI as a first-class component of the workflow engine: every step can use LLMs, agents, or document intelligence natively. AI-bolted-on platforms add AI connectors, copilots, or plugins on top of an existing integration architecture. The difference determines whether AI augments your automation or just sits alongside it.

TL;DR

MuleSoft, Boomi, and Oracle OIC were built as integration-first platforms. Their AI capabilities were added via acquisitions, connectors, and copilot layers on top of existing architecture. AI-native iPaaS platforms build LLMs, agents, and document intelligence as first-class workflow steps, not add-ons that require separate licences or developer expertise. The practical difference shows up in four areas: implementation time for AI workflows, the skills required to build them, what happens when AI steps fail, and how AI output connects to system actions. eZintegrations was built AI-native from the ground up: AI Workflows, Goldfinch AI multi-agent orchestration with 9 native extensible tools, and a 5,000+ endpoint API catalog as one platform. If your automation roadmap includes intelligent document processing, agentic workflows, or LLM-powered routing, the architecture your iPaaS was built on determines whether you can execute that roadmap.

Introduction

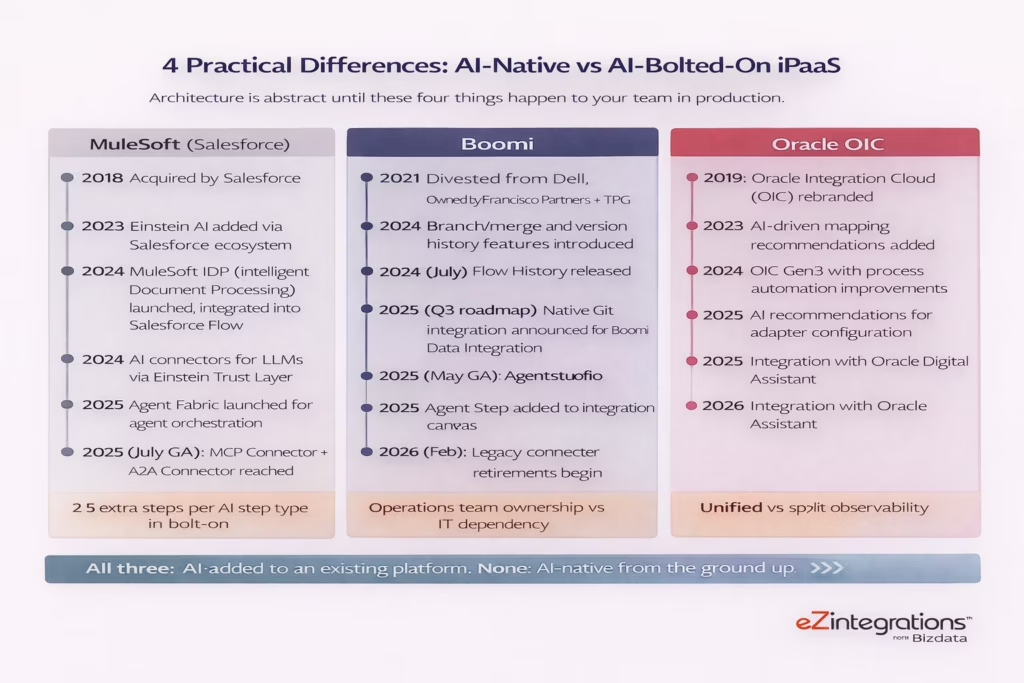

In 2026, every major iPaaS vendor has an AI story. MuleSoft has Agent Fabric and Einstein connectors. Boomi has Agentstudio, which reached general availability in May 2025. Oracle OIC has AI-driven recommendations. They all have blog posts, product pages, and keynote demos showing AI in action.

But the question is not whether a platform has AI features. The question is how those features were built into the platform and what that architecture means for your team when you try to use them in production.

There is a meaningful difference between a platform that was designed from the start to treat AI as a first-class element of every workflow, and a platform that was designed for API-led integration and later added AI capabilities through acquisitions, connector libraries, and external service dependencies. That difference shows up concretely in implementation time, skills requirements, failure handling, and the total cost of building AI-powered workflows at enterprise scale.

This article examines both architectures with specifics, not marketing claims.

What Does “AI-Native iPaaS” Actually Mean?

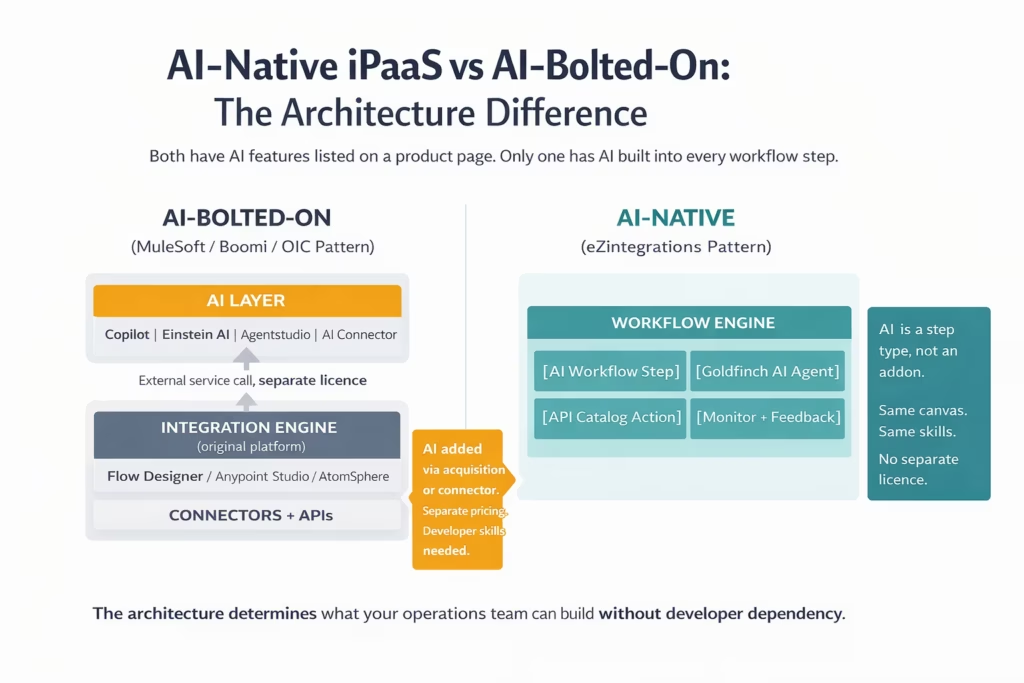

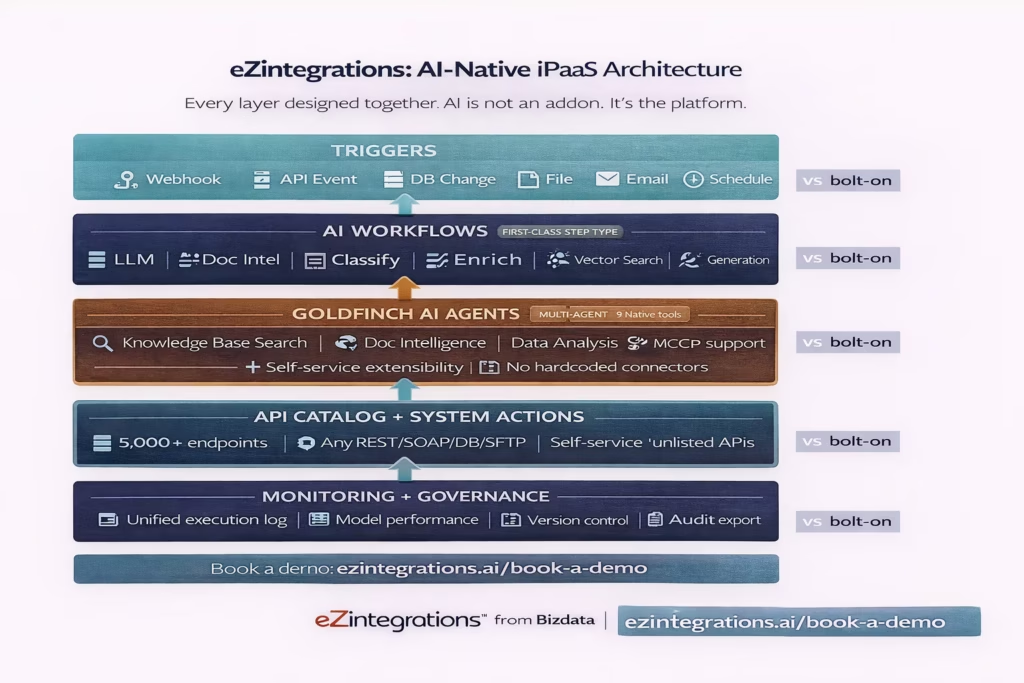

An AI-native iPaaS is one where AI model calls, agent orchestration, document intelligence, and LLM-powered processing are first-class step types in the workflow engine, not external connectors, plugins, or platform add-ons that require separate configuration, separate licensing, or developer-level skills to use.

The workflow automation market hit $21.17 billion in 2025 and is growing at a 14.3% CAGR, with most of that acceleration attributed to AI. By 2026, 40% of enterprise applications are projected to feature task-specific AI agents, up from less than 5% in 2025. Growth at that pace requires a platform architecture designed to deliver it.

An AI-native platform treats an LLM call the same way it treats an API call: as a configurable step in a workflow canvas that your operations team can place, configure, and connect to upstream and downstream steps without writing code. The AI step reads data from the previous step, processes it through the model, and passes structured output to the next step. Field mapping, confidence thresholds, error routing, and feedback logging all work the same way for AI steps as for any other step.
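The idea that an AI step and an API step share one interface can be sketched in a few lines of Python. This is a minimal illustration, not eZintegrations code: `Step`, `run_workflow`, `fake_llm_classify`, the `manual_review` route, and the 0.8 threshold are all hypothetical, and the model call is stubbed so the example runs offline.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

Payload = Dict[str, Any]


@dataclass
class Step:
    """One workflow step. API steps and AI steps share this shape."""
    name: str
    run: Callable[[Payload], Payload]
    min_confidence: float = 0.0               # only meaningful for AI steps
    on_low_confidence: str = "manual_review"  # hypothetical route name


def fetch_invoice(payload: Payload) -> Payload:
    # Stand-in for an API call step.
    return {**payload, "invoice_text": "ACME Ltd, total due 1200.00 EUR"}


def fake_llm_classify(payload: Payload) -> Payload:
    # Stand-in for a model call: structured output plus a confidence score.
    is_invoice = "total due" in payload["invoice_text"]
    return {**payload,
            "doc_type": "invoice" if is_invoice else "unknown",
            "confidence": 0.93 if is_invoice else 0.40}


def run_workflow(steps: List[Step], payload: Payload) -> Payload:
    for step in steps:
        payload = step.run(payload)
        # Same routing rule for every step; AI steps simply set a threshold.
        if payload.get("confidence", 1.0) < step.min_confidence:
            return {**payload, "route": step.on_low_confidence}
    return {**payload, "route": "completed"}


steps = [
    Step("fetch_invoice", fetch_invoice),
    Step("classify_document", fake_llm_classify, min_confidence=0.8),
]
result = run_workflow(steps, {"invoice_id": "INV-1"})
print(result["doc_type"], result["route"])
```

The point of the design is that the engine loop never branches on step type: confidence thresholds and error routes are ordinary step settings.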

An AI-bolted-on platform provides the integration engine and then adds AI through a separate service layer. The AI capabilities may be excellent in isolation, but accessing them from within a workflow requires calling an external service, which typically means a developer configuring the connector, a separate authentication setup, a separate monitoring stack, and often a separate licence for the AI service itself.

The distinction is not just architectural. It’s operational. Your team’s ability to build, modify, and maintain AI workflows without constant developer involvement depends on whether AI is a native step type or an external dependency.

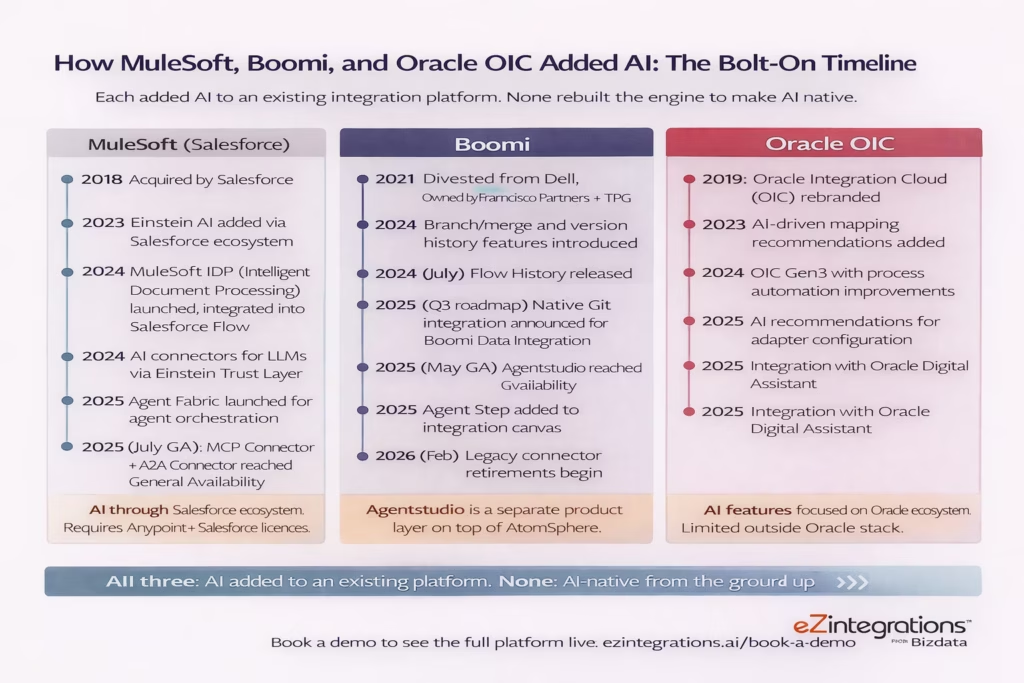

How MuleSoft, Boomi, and Oracle OIC Added AI: The Bolt-On Pattern

MuleSoft, Boomi, and Oracle OIC each added AI capabilities to existing integration architectures through a combination of acquisitions, connector development, and third-party service integrations. The result is AI features that work, but require developer skills and additional configuration layers to use from within an integration workflow.

MuleSoft was acquired by Salesforce in 2018. The platform’s AI capabilities have been built primarily through the Einstein ecosystem: the Einstein Trust Layer, Einstein for Anypoint Code Builder (which helps developers generate DataWeave code and flow configurations), MuleSoft IDP (Intelligent Document Processing, launched as a native Salesforce Flow component), and AI connectors for third-party LLMs routed through Salesforce’s Models API. The MuleSoft Agent Fabric, for orchestrating AI agents, reached a significant milestone in 2025. The MCP Connector and A2A Connector reached General Availability in July 2025.

The key characteristic of MuleSoft’s AI model: AI capabilities are delivered through the Salesforce ecosystem. To use Einstein AI within a MuleSoft integration, you need Anypoint Platform plus a Salesforce licence that includes the relevant Einstein features. MuleSoft’s DataWeave transformation language requires certified developer expertise regardless of how AI-assisted code generation helps. The platform is developer-centric by design.

Boomi added its AI capabilities through a separate product layer called Agentstudio, which reached General Availability in May 2025. Agentstudio lets you design and deploy AI agents, and a new “Agent Step” in the integration canvas lets workflows interact with those agents. Prior to Agentstudio’s GA, Boomi’s AI features were limited to crowdsourced “Boomi Suggest” recommendations for field mappings and integration design suggestions. For capabilities like Intelligent Document Processing, Boomi relies on third-party connectors rather than native platform features (as explicitly noted on MuleSoft’s own comparison page). The relationship between Agentstudio and AtomSphere (Boomi’s core integration engine) is an integration between two products, not a unified native architecture.

Oracle OIC added AI as a set of features layered onto its integration platform: AI-driven adapter recommendations, natural language assistance in process design, and integration with Oracle Digital Assistant. The AI capabilities are strongest when you’re integrating within the Oracle ecosystem (Oracle ERP, HCM, SCM). Outside the Oracle stack, the AI features are less contextually aware and the recommended integrations are less targeted.

None of these platforms are poorly designed. They are each the product of rational evolution: build an excellent integration engine, then add AI as the market demands it. But that evolution path creates a structural reality: AI is an addition to the platform, not the foundation of it.

The 4 Practical Differences Between AI-Native and AI-Bolted-On

The architectural difference between AI-native and AI-bolted-on iPaaS produces four specific, measurable differences in how your team experiences building and maintaining AI workflows: implementation complexity, skills required, failure handling, and cost of AI at scale.

Implementation complexity is the first place the architectural difference shows up. On an AI-bolted-on platform, adding an AI step to a workflow means configuring a connector to an external AI service, setting up authentication to that service separately from your integration platform credentials, mapping your workflow data to the connector’s input format, handling the connector’s output format in your downstream steps, and monitoring two separate systems for failures. On an AI-native platform, you drag an AI step onto the canvas, configure a prompt template that references upstream data, define the output schema, and map it to the next step. Same experience as every other step type.

Skills required is the second difference. On MuleSoft, even with Einstein-assisted code generation, DataWeave remains the data transformation language for complex integrations. DataWeave requires developer expertise. Boomi’s Agentstudio is a competent agent design environment, but it’s a separate product with its own learning curve, separate from the integration canvas skills your team already has. On an AI-native platform, AI step configuration uses the same visual canvas your operations team already knows. There’s no additional skills investment to add AI to a workflow.

Failure handling is the third difference, and it’s the one that matters most in production. When an AI connector fails on a bolt-on platform, the failure could originate from the integration platform, the connector, or the external AI service. Three potential sources. Two monitoring systems. Unclear attribution. On an AI-native platform, every step including AI steps logs to the same execution record. The same error format. The same alert system. The same retry configuration. When an AI step fails, your team debugs it the same way they debug any other failed step.
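As a rough sketch of what "same execution record, same error format, same retry configuration" could look like, the Python below runs an API step and a flaky AI step through one helper that writes every attempt to a single log. The names `run_step` and `ExecutionLog`, the log fields, and the retry count are assumptions made for illustration, not a description of any vendor's internals.

```python
from typing import Any, Callable, Dict, List

ExecutionLog = List[Dict[str, Any]]


def run_step(name: str, kind: str, fn: Callable[[], Any],
             log: ExecutionLog, max_retries: int = 2) -> Any:
    """Run one step with retries, writing every attempt to the shared log."""
    for attempt in range(1, max_retries + 2):
        try:
            output = fn()
            log.append({"step": name, "kind": kind, "attempt": attempt,
                        "status": "ok", "error": None})
            return output
        except Exception as exc:  # same error format for every step type
            log.append({"step": name, "kind": kind, "attempt": attempt,
                        "status": "failed", "error": repr(exc)})
    raise RuntimeError(f"step {name!r} failed after {max_retries + 1} attempts")


calls = {"n": 0}


def flaky_llm_step() -> str:
    # Stubbed AI step: times out once, then succeeds.
    calls["n"] += 1
    if calls["n"] == 1:
        raise TimeoutError("model timed out")
    return "summary text"


log: ExecutionLog = []
run_step("lookup_order", "api", lambda: {"order_id": 42}, log)
run_step("summarise_order", "ai", flaky_llm_step, log)
for entry in log:
    print(entry)
```

The failed AI attempt and its successful retry sit in the same list as the API call, so one query over `log` answers "what failed and where".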

Cost at scale is the fourth difference. A bolt-on AI platform means paying for the integration platform licence, the AI service licence (Einstein, Agentstudio, OCI AI services), connector configuration time, and developer hours for each new AI workflow. As you build more AI workflows, each one adds marginal cost across all of those cost lines. An AI-native platform with per-automation pricing means each additional AI workflow costs the same as any other automation: one automation licence, no additional AI service licence, no additional developer hours for routine AI step configuration.

Summary Comparison Table

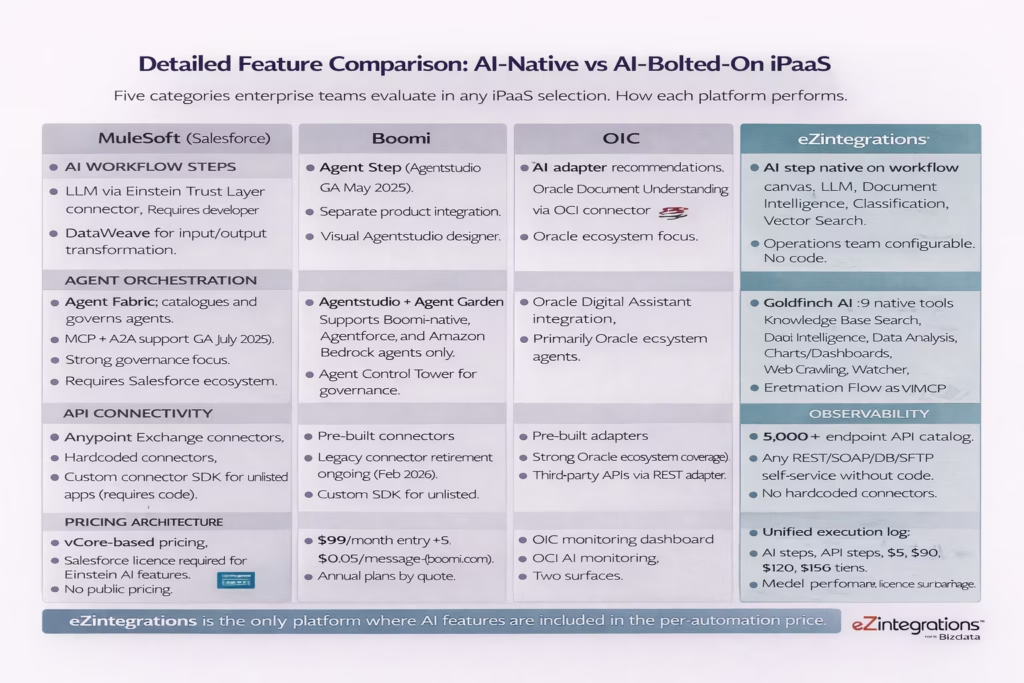

| Capability | MuleSoft | Boomi | Oracle OIC | eZintegrations |
|---|---|---|---|---|
| AI architecture | Bolt-on via Salesforce Einstein ecosystem | Bolt-on via Agentstudio (GA May 2025) | Bolt-on via OCI AI services | AI-native: first-class step type |
| LLM at any workflow step | Via Einstein Trust Layer connector (developer-configured) | Via Agentstudio Agent Step (separate product) | Via OCI AI connectors | Yes, native. No connector. No separate licence. |
| No-code AI step configuration | No: DataWeave + connector configuration required | Partial: Agentstudio has visual designer, separate from integration canvas | No: AI configuration requires technical setup | Yes: same visual canvas as all other steps |
| Document intelligence | Native IDP via Salesforce Flow (not Anypoint canvas) | Third-party connectors required | Oracle Document Understanding via OCI | Native Document Intelligence step |
| Multi-agent orchestration | Agent Fabric (governance focus, MCP/A2A GA July 2025) | Agent Control Tower (Boomi/Agentforce/Amazon Bedrock only) | Oracle Digital Assistant integration | Goldfinch AI: 9 native tools, self-extensible, multi-agent |
| Agentic tool extensibility | MCP server creation for API exposure | Agent Garden (Boomi-native agents only) | Limited: Oracle ecosystem focus | Self-service tool addition beyond 9 native tools. No vendor involvement. |
| API catalog breadth | Anypoint Exchange connectors (hardcoded) | Pre-built connectors (listed apps only) | Pre-built adapters (Oracle ecosystem focus) | 5,000+ endpoints. Any unlisted API or DB as self-service. |
| Monitoring: AI step observability | Separate Einstein monitoring + Anypoint monitoring | Separate Agentstudio + AtomSphere monitoring | Separate OCI AI + OIC monitoring | Unified: AI steps in same execution log as all steps |
| Skills required for AI workflows | MuleSoft certified developer + Einstein specialist | Boomi architect + Agentstudio designer | Oracle integration developer | Operations team using existing no-code canvas |
| Pricing model | vCore-based + Salesforce licences for Einstein features | Per connection + Agentstudio licence | Hourly/message packs + OCI AI credits | Per automation, annual. No separate AI licence. |
| Version control + governance | Native (Anypoint) | Native (with 2024 branch/merge) | Native (OIC) | Native: env-isolated, approval-gated, audit-log export |

Detailed Feature Comparison

AI Workflow Steps: Native vs Connected

MuleSoft’s AI workflow capabilities require your developer to configure the Einstein Trust Layer connector, set up the auth to Salesforce’s Models API, write DataWeave to transform your workflow data into the connector’s required format, and parse the LLM output back into your workflow’s data model. The capability works. The configuration requires a developer.

eZintegrations places an AI Workflow step on the same canvas as every other step. Your operations team selects the AI capability (LLM, Document Intelligence, Classification, or Vector Search), configures the prompt template using a visual editor that auto-populates available upstream data fields, defines the output schema, and maps the output to downstream steps. No DataWeave. No connector configuration. No developer.
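To make "prompt template plus output schema" concrete, here is a small Python sketch of the pattern using only the standard library. It is an assumption-laden illustration rather than the product's editor: the `upstream` fields, the template text, the `output_schema` format, and `validate_output` are all hypothetical, and the model reply is hard-coded.

```python
import json
from string import Template
from typing import Any, Dict

# Hypothetical output of an upstream step that the template can reference.
upstream = {"customer": "ACME Ltd", "ticket_body": "My invoice total is wrong."}

prompt_template = Template(
    "Classify the support ticket from $customer.\n"
    "Ticket: $ticket_body\n"
    'Reply as JSON with keys "category" and "priority".'
)

# Hypothetical output schema: field name -> expected Python type.
output_schema = {"category": str, "priority": int}


def validate_output(raw: str, schema: Dict[str, type]) -> Dict[str, Any]:
    """Parse the model reply and keep only fields that match the schema."""
    data = json.loads(raw)
    for field, expected in schema.items():
        if not isinstance(data.get(field), expected):
            raise ValueError(f"field {field!r} missing or not {expected.__name__}")
    return {field: data[field] for field in schema}


prompt = prompt_template.substitute(upstream)
# Stubbed model reply so the sketch runs without calling a model.
model_reply = '{"category": "billing", "priority": 2, "notes": "ignored"}'
mapped = validate_output(model_reply, output_schema)
print(prompt)
print(mapped)
```

Validating against a declared schema before mapping is what lets a downstream step treat model output like any other structured response.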

Multi-Agent Orchestration: Governance vs Execution

MuleSoft’s Agent Fabric is primarily a governance and orchestration layer: it catalogues agents built across your Salesforce ecosystem and provides oversight via MCP and A2A protocols. It’s strong at governing agents you’ve already built. Building those agents still requires developer expertise.

Boomi’s Agentstudio is a capable agent design environment that reached General Availability in May 2025. The Agent Control Tower supports Boomi-native agents, Agentforce agents, and Amazon Bedrock agents. Agents built outside those three ecosystems are not supported.

eZintegrations’ Goldfinch AI ships with 9 native out-of-the-box agent tools: Knowledge Base Vector Search, Document Intelligence, Data Analysis, Data Analytics with Charts/Graphs/Dashboards, Web Crawling, Watcher Tools, API Tool Call, Integration Workflow as Tool, and Integration Flow as MCP. Users can add more tools as self-service beyond these 9, without platform vendor involvement. Agents are not limited to a specific external ecosystem.
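One way to picture self-service tool extensibility is a registry in which user-added tools are registered through the same call as built-in ones. The Python below is a hypothetical sketch of that pattern only: `ToolRegistry`, the tool keys, and the stubbed tool bodies are invented for illustration and do not reflect Goldfinch AI's actual interfaces.

```python
from typing import Callable, Dict, List

Tool = Callable[[str], str]


class ToolRegistry:
    """Hypothetical registry holding built-in and user-added tools alike."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, name: str, tool: Tool) -> None:
        if name in self._tools:
            raise ValueError(f"tool {name!r} already registered")
        self._tools[name] = tool

    def call(self, name: str, query: str) -> str:
        if name not in self._tools:
            raise KeyError(f"unknown tool {name!r}")
        return self._tools[name](query)

    def names(self) -> List[str]:
        return sorted(self._tools)


registry = ToolRegistry()
# Two stand-ins for built-in tools (keys loosely based on the list above).
registry.register("web_crawling", lambda q: f"crawled: {q}")
registry.register("api_tool_call", lambda q: f"called API with: {q}")
# A user-added tool, registered the same way as the built-ins.
registry.register("fx_rate_lookup", lambda q: f"rate for {q}: <stubbed>")

print(registry.names())
print(registry.call("fx_rate_lookup", "EUR/USD"))
```

Because an agent only ever sees `call(name, query)`, adding a tool does not require changing the agent or the registry code.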

API Connectivity: Hardcoded Connectors vs API Catalog

MuleSoft’s Anypoint Exchange contains hardcoded connectors built against specific API versions of popular applications. When SAP releases a new S/4HANA API version, you wait for MuleSoft to release an updated connector. Custom connectors for unlisted applications require the connector SDK and developer expertise.

eZintegrations operates via an API catalog of 5,000+ pre-catalogued endpoints from enterprise applications. If an endpoint isn’t in the catalog, your team adds it as a self-service action by providing the API URL, authentication method, and schema. No code. No support ticket. No wait for a vendor connector update.
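The three inputs named above (API URL, authentication method, schema) map naturally onto a small validated record. The following Python sketch shows that shape under stated assumptions: `CatalogEntry`, `add_endpoint`, the allowed auth methods, and the `api.example.com` URL are placeholders, not the platform's real data model.

```python
from dataclasses import dataclass, field
from typing import Dict
from urllib.parse import urlparse

ALLOWED_AUTH = {"api_key", "oauth2", "basic"}  # illustrative set only


@dataclass(frozen=True)
class CatalogEntry:
    """Hypothetical catalog record for one self-service endpoint."""
    name: str
    url: str
    auth_method: str
    schema: Dict[str, str] = field(default_factory=dict)  # field -> type name

    def __post_init__(self) -> None:
        parsed = urlparse(self.url)
        if parsed.scheme != "https" or not parsed.netloc:
            raise ValueError("url must be an absolute https URL")
        if self.auth_method not in ALLOWED_AUTH:
            raise ValueError(f"unsupported auth method: {self.auth_method}")


catalog: Dict[str, CatalogEntry] = {}


def add_endpoint(entry: CatalogEntry) -> None:
    # Validation already ran in __post_init__, so this is a plain insert.
    catalog[entry.name] = entry


add_endpoint(CatalogEntry(
    name="warehouse_stock",
    url="https://api.example.com/v1/stock",  # placeholder URL
    auth_method="api_key",
    schema={"sku": "string", "quantity": "integer"},
))
print(sorted(catalog))
```

Rejecting malformed entries at creation time keeps bad endpoints out of the catalog without a review step.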

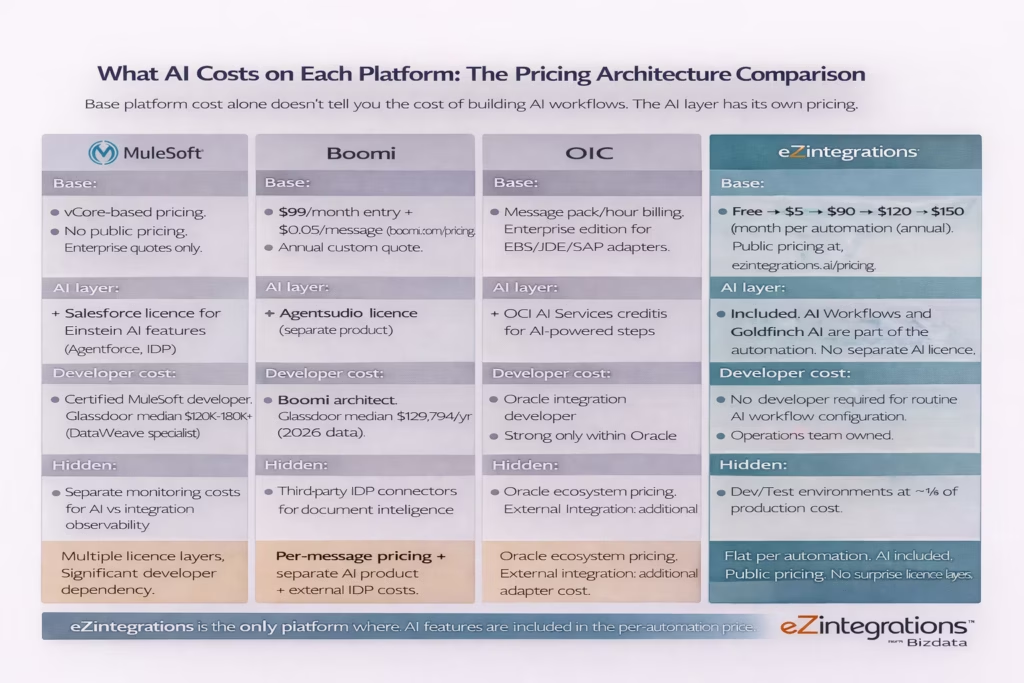

Pricing Architecture: What AI Costs on Each Model

The pricing architecture of AI capabilities in an iPaaS is as important as the technical architecture. On bolt-on platforms, AI features typically require additional licences layered on top of the base integration platform cost. On AI-native platforms, AI capabilities are included in the per-automation pricing.

MuleSoft’s AI pricing is a layer on top of an already opaque base price. The Anypoint Platform is priced per vCore (compute unit) with no public pricing. Using Einstein AI features requires a Salesforce licence that includes the relevant Einstein capabilities. Using MuleSoft Agent Fabric requires Anypoint licences. Building and maintaining DataWeave transformations for AI connectors requires a certified MuleSoft developer, with Glassdoor-reported salaries of $120,000 to $180,000 per year for DataWeave specialists.

Boomi’s base pricing is more transparent: $99/month entry point plus $0.05/message at boomi.com/pricing. But Agentstudio is a separate product with separate pricing. Boomi Intelligent Document Processing requires third-party connectors, adding external service cost. A Boomi developer costs a Glassdoor-reported median of $129,794 per year (2026 data).

Oracle OIC uses message pack and hourly billing, with Enterprise edition required for full adapter access to Oracle EBS, JD Edwards, Siebel, and SAP. OCI AI services for AI-powered steps add usage-based credits on top of the OIC base cost.

eZintegrations publishes its pricing on its pricing page: Free, $5, $90, $120, and $150 per automation per month (annual billing). AI Workflows and Goldfinch AI are included in the automation pricing. There is no separate AI service licence. Non-AI automations carry unlimited transactions with no per-message charges. Dev and Test environments cost approximately one-third of production pricing each.
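The per-automation arithmetic can be worked through with a short Python helper. It is a back-of-envelope sketch: the function `annual_cost` is hypothetical, it treats each non-production environment as exactly one-third of production (the text says "approximately"), and it ignores any AI credits or other usage-based charges.

```python
def annual_cost(automations: int, monthly_price: float,
                include_dev: bool = True, include_test: bool = True) -> float:
    """Estimated yearly cost in USD for automations on a single tier."""
    production = automations * monthly_price * 12
    non_prod_envs = int(include_dev) + int(include_test)
    # Assumption: each non-production environment costs one-third of production.
    return production + non_prod_envs * production / 3


# Example: 10 automations on the $90 tier.
print(annual_cost(10, 90, include_dev=False, include_test=False))  # 10800.0
print(annual_cost(10, 90))  # 18000.0
```

With 10 automations at $90 per month, production comes to $10,800 a year; adding Dev and Test at one-third each brings the estimate to $18,000.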

Who Should Use AI-Native vs AI-Bolted-On iPaaS?

This is a direct answer to the evaluation question. Your choice between AI-native and AI-bolted-on iPaaS should be driven by three factors: the size of your AI automation roadmap, your available internal skills, and how quickly your team needs to build and modify AI workflows.

Choose a bolt-on platform (MuleSoft, Boomi, or Oracle OIC) if:

You have an existing investment in that platform and your AI roadmap is modest. If you’re primarily running traditional integration workflows and you need one or two AI-assisted features (suggested field mappings in Boomi, Einstein-assisted code generation in MuleSoft), the bolt-on AI additions to your existing platform may be sufficient without requiring a platform change.

You have a dedicated team of certified developers for the platform and your AI feature requirements fit within the platform’s supported ecosystems. MuleSoft’s Agent Fabric is a strong governance layer if you’re already building agents within the Salesforce/Agentforce ecosystem. Boomi’s Agentstudio works well if your agents primarily target Boomi-native, Agentforce, or Amazon Bedrock.

Your organisation is deeply embedded in the Oracle ecosystem and your primary AI use cases involve Oracle applications. OIC’s AI features are strongest within that context.

Choose an AI-native platform (eZintegrations) if:

Your automation roadmap is AI-first, not integration-first with AI added. If you’re planning to build intelligent document processing, agentic workflows, LLM-powered routing, or multi-agent orchestration as core capabilities rather than optional additions, the architecture of your platform matters enormously.

You want your operations team, not just developers, to build and maintain AI workflows. If the skills required to build AI steps require a certified developer, your operations team’s autonomy is limited regardless of what the platform’s no-code features look like for standard integration.

You need predictable costs as your AI workflow volume grows. Per-message pricing plus separate AI service licences create cost structures that are difficult to forecast as your automation footprint expands. Flat per-automation pricing with AI included makes budget forecasting straightforward.

You need to connect to systems outside a specific vendor ecosystem. eZintegrations’ API catalog covers 5,000+ enterprise endpoints with self-service onboarding for unlisted systems. Your AI workflows can reach any system your enterprise runs, not just the ones within the platform vendor’s connector library.

Why eZintegrations Is Built AI-Native

eZintegrations was designed from the ground up with AI as a first-class component of the workflow engine, not as an addition to an existing integration architecture. The AI Workflows layer, the Goldfinch AI multi-agent orchestration layer, and the 5,000+ endpoint API catalog are all native parts of the same platform.

When you add an AI Workflow step in eZintegrations, it uses the same visual canvas as every other step. The prompt template editor auto-populates available fields from upstream steps. The output schema is configured in the same field mapper you use for API responses. Error routing for an AI step uses the same conditional routing logic as any other step. Monitoring for AI step performance appears in the same execution log as API call logs and database query results.

Goldfinch AI ships with 9 native out-of-the-box agent tools: Knowledge Base Vector Search, Document Intelligence, Data Analysis, Data Analytics with Charts/Graphs/Dashboards, Web Crawling, Watcher Tools, API Tool Call, Integration Workflow as Tool, and Integration Flow as MCP. Users can add more tools as self-service beyond these 9, without requiring a vendor support ticket or a developer to write a custom connector.

The Automation Hub includes 1,000+ pre-built templates, many of which combine AI Workflow steps with API catalog actions. You can import an intelligent invoice processing template, configure it for your ERP and LLM preference, set your confidence thresholds, and have a production-grade AI workflow live in days, not months.

Bottom Line Verdict

If your automation roadmap is integration-first with AI added as a convenience feature, MuleSoft, Boomi, and Oracle OIC each offer capable platforms with AI capabilities that work within their ecosystems. If your roadmap is AI-first, the architecture of your platform determines whether you can execute it without a developer dependency for every AI step.

The bolt-on model is not a bad architecture. It’s the rational result of building excellent integration platforms and then adding AI as market demand emerged. MuleSoft’s Agent Fabric is a serious governance layer for Salesforce-ecosystem AI agents. Boomi’s Agentstudio, now GA, is a capable agent design environment. Oracle OIC’s AI features serve Oracle-centric organisations well.

But the bolt-on model has a structural ceiling. Every new AI workflow requires a developer to configure the connector, set up authentication, handle input/output transformation, and monitor a second system. That ceiling limits how quickly your organisation can scale AI automation and how much operational ownership your non-developer teams can exercise.

eZintegrations was built to remove that ceiling. AI Workflows, Goldfinch AI multi-agent orchestration, the 5,000+ endpoint API catalog, and unified monitoring are all native components of one platform. Your operations team builds AI workflows the same way they build any other workflow. Your costs are predictable. Your AI automation roadmap is not gated on developer availability.

The verdict: for teams where AI workflow automation is a core strategic capability rather than a convenience feature, AI-native architecture is the better foundation.

Book a free demo to see eZintegrations AI Workflows live

Frequently Asked Questions

1. Is eZintegrations better than MuleSoft for AI workflows?

For organisations where AI workflow automation is a strategic priority and non-developer ownership matters, eZintegrations provides a more accessible architecture. AI steps are native to the workflow engine, configured through the same no-code canvas, and included in per-automation pricing without requiring a Salesforce ecosystem licence. MuleSoft remains a strong option for enterprises deeply embedded in the Salesforce ecosystem that rely on API-led connectivity and are prepared to maintain certified MuleSoft developer teams.

2. How does Boomi compare to eZintegrations for AI automation?

Boomi Agentstudio, which reached general availability in May 2025, provides a capable environment for designing AI agents, but it operates as a separate product layered on top of the AtomSphere integration platform rather than being embedded directly in the integration canvas. eZintegrations integrates AI workflow steps, agent orchestration, and API connectivity within a single unified canvas, making it easier for operations teams to configure AI-driven workflows without learning an additional product interface.

3. What is the difference between AI-native iPaaS and AI-bolted-on iPaaS?

An AI-native iPaaS treats AI capabilities such as LLM calls, agent orchestration, and document intelligence as first-class step types within the workflow engine, configured in the same visual canvas as other integration steps. An AI-bolted-on iPaaS adds AI capabilities through connectors, plugins, or external services layered on top of an existing integration platform. This architectural difference affects implementation complexity, operational ownership, failure handling, and overall cost.

4. Does Boomi Agentstudio support any AI agent outside the Boomi ecosystem?

According to Boomi release documentation from May 2025, the Agent Control Tower governance layer supports Boomi-native agents, Agentforce agents, and Amazon Bedrock agents. Agents created on other frameworks or platforms are not currently supported within that governance environment. In contrast, eZintegrations’ Goldfinch AI allows agents to use any callable tool, including its nine native tools as well as additional tools created by users through self-service configuration, without ecosystem restrictions.

5. Is Oracle OIC suitable for AI workflows outside the Oracle ecosystem?

Oracle Integration Cloud provides strong AI capabilities when workflows remain within the Oracle ecosystem, such as integrations between Oracle ERP, HCM, SCM, and Oracle Digital Assistant. When workflows involve non-Oracle systems, AI functionality is typically accessed through OCI AI services connectors, which reduces the contextual intelligence available for cross-system automation. Enterprises operating mixed-vendor environments, such as SAP, Salesforce, Shopify, and third-party logistics providers, often find platforms with broader API coverage, like eZintegrations, more suitable.

6. How does eZintegrations pricing compare to MuleSoft for AI workflow automation?

MuleSoft pricing is based on vCore capacity with no public price list and typically requires additional Salesforce licences for Einstein AI capabilities, along with certified developer resources. eZintegrations publishes transparent pricing tiers: Free, $5, $90, $120 plus AI credits, and $150 plus AI credits per automation per month, billed annually. AI workflows and Goldfinch AI capabilities are included within the automation pricing, with no additional AI service licence required.

Conclusion

The iPaaS market is converging on AI. Every major platform now has an AI story. The strategic question for enterprise teams in 2026 is not which platform has AI features: they all do. The question is how those features are architected and what that architecture means for your team’s ability to build, own, and scale AI workflows without developer dependency.

MuleSoft, Boomi, and Oracle OIC are strong integration platforms with AI capabilities that work within their respective ecosystems. If your AI roadmap is modest and your team is already invested in one of these platforms, the bolt-on additions may serve you well.

If your organisation’s automation future is AI-first, the architectural foundation matters. AI as a first-class workflow step, not an external connector. Agent orchestration as a native tool set, not a separate product licence. API connectivity via an open catalog, not a hardcoded connector library. Unified monitoring for AI and integration steps, not two separate observability stacks. Per-automation pricing with AI included, not layered licence additions.

That is what AI-native iPaaS means in practice. And that is what eZintegrations delivers.

Book a free demo to see eZintegrations AI Workflows and Goldfinch AI in action

Explore AI Workflows for the LLM-powered pipeline layer. Visit eZintegrations pricing for full tier details.