Agentic AI vs AI Agents: What’s the Difference and Why It Matters for Enterprise

March 24, 2026

An AI agent is an individual software component that perceives inputs and executes a defined task. Agentic AI is a system that deploys, orchestrates, and governs multiple agents working together toward a complex goal. Enterprises need both: agents for task execution, agentic AI for end-to-end process automation that reasons, plans, and adapts across systems.

TL;DR

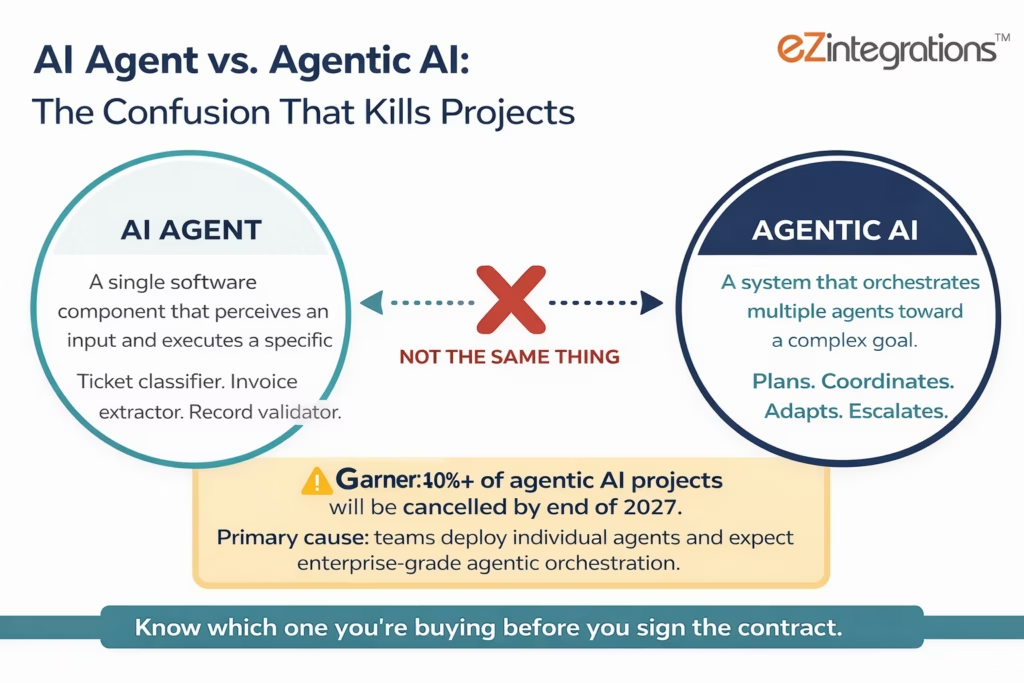

Agentic AI vs AI agents:

- An AI agent is a single-task executor: it perceives an input, applies logic, and completes a specific, bounded action. It does one job well.

- Agentic AI is a system: it deploys and orchestrates multiple agents, maintains shared context, reasons about multi-step goals, and adapts when exceptions occur.

- The confusion between the two terms is costly. Gartner identifies “agent washing,” the rebranding of basic agents or chatbots as agentic AI, as a driver of the hype behind its prediction that more than 40% of agentic AI projects will be cancelled by end of 2027.

- Enterprises need both: agents for task execution, agentic AI for end-to-end process orchestration that no single agent can handle alone.

- eZintegrations Goldfinch AI delivers both layers: 9 native agent tools for task execution and a multi-agent orchestration platform with shared governance, Human Approval Gates, and 5,000+ API endpoint coverage.

The Terminology Problem That Is Costing Enterprises Money

“AI agent” and “agentic AI” are not the same thing, and the confusion is not academic. When a procurement team buys a platform marketed as “agentic AI” and receives a collection of single-task bots with no orchestration layer, the result is exactly what Gartner describes: a cancelled project, a wasted budget, and a 12-month delay to the next attempt.

Gartner predicts more than 40% of agentic AI projects will be cancelled by end of 2027. The stated causes: escalating costs, unclear business value, and inadequate risk controls. The unstated cause in many of those failures is simpler: the team thought they were buying an agentic system and received individual task agents that couldn’t coordinate, share context, or adapt to exceptions.

This guide gives you the precise definitions, the architectural distinction, and the decision framework you need to evaluate any AI automation platform accurately in 2026. It covers what an AI agent actually is, what agentic AI actually is, where each belongs in your enterprise architecture, and when you need both.

The distinction is not technical pedantry. It is the difference between a team that automates 40% of its invoice handling and a team that automates 92%, with the remaining 8% routed to a human reviewer with full context pre-filled.

What Is an AI Agent? Definition and Core Characteristics

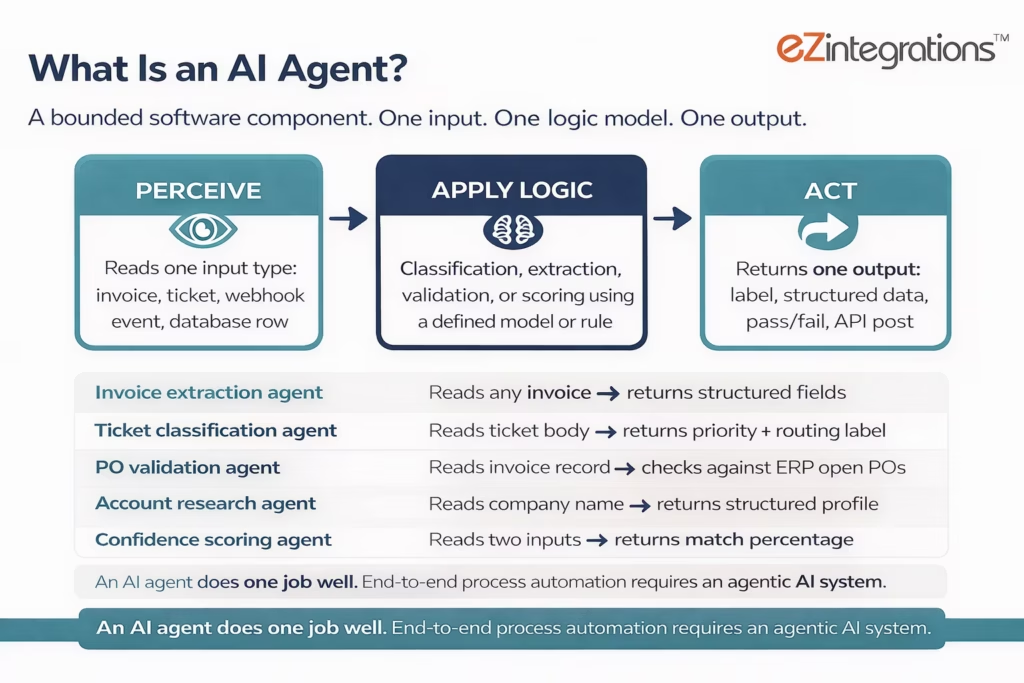

An AI agent is a software component that perceives an input from its environment, applies logic or a model to interpret it, and executes a specific, bounded action toward a defined goal.

That definition is deliberately narrow. An AI agent is not a system. It is a component. It does one job. It does that job well. And it needs an orchestration layer to do anything beyond that one job.

ISACA’s 2025 analysis draws the key distinction cleanly: “Traditional chatbots are agents because they respond to inputs, but they lack agency because they don’t pursue independent goals.” A traditional agent operates within predefined parameters. It responds to human direction. It does not initiate.

The four characteristics that define an AI agent:

Perception. The agent reads an input: an incoming invoice, a support ticket JSON payload, a webhook event from a monitoring system, a row in a database that matches a watch condition. The input triggers the agent.

Bounded logic. The agent applies a defined decision model to the input: a classification rule, an LLM-based extraction prompt, a validation check against a reference data set, a routing condition. The logic is scoped. It handles this type of input with this type of logic.

Single action. The agent produces one output: a classification label, an extracted data structure, a validation pass/fail signal, a record written to a system of record. One output. One action.

Defined scope. The agent operates within explicit boundaries set at configuration time. It does not extend beyond those boundaries. It does not re-plan when the input is unexpected. It either handles the input or flags it as an exception for a human or an orchestration layer to resolve.
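The four characteristics above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the `classify_ticket` function, the `RULES` table, and the `OutOfScope` exception are all hypothetical names, and a keyword rule stands in for whatever model or LLM prompt a real agent would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Classification:
    priority: int        # 1 (urgent) to 3 (routine)
    routing_label: str


class OutOfScope(Exception):
    """Raised when the input falls outside the agent's configured scope."""


# Defined scope: rules fixed at configuration time (hypothetical values).
RULES = {
    "outage": Classification(priority=1, routing_label="incident"),
    "invoice": Classification(priority=2, routing_label="billing"),
    "password": Classification(priority=3, routing_label="it-helpdesk"),
}


def classify_ticket(ticket: dict) -> Classification:
    # Perception: read the one kind of input this agent understands.
    body = ticket.get("body")
    if not isinstance(body, str) or not body.strip():
        raise OutOfScope("ticket has no text body")

    # Bounded logic: one scoped decision model (a keyword rule here).
    text = body.lower()
    for keyword, result in RULES.items():
        if keyword in text:
            # Single action: return exactly one output.
            return result

    # No re-planning: unexpected input is flagged, not worked around.
    raise OutOfScope("no rule matched")
```

Note what the sketch leaves out: it keeps no memory between calls, writes no audit log, and has no idea what should happen after it returns. Those gaps are what an orchestration layer fills.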

Practical enterprise examples of AI agents:

- A document extraction agent that reads any invoice format and returns structured field data (vendor, amount, line items, due date).

- A ticket classification agent that reads a support ticket body and returns a priority score and routing label.

- An account research agent that accepts a company name and returns a structured profile from web sources.

- A PO validation agent that accepts an extracted invoice record and checks it against open POs in an ERP via API.

- A confidence scoring agent that accepts two data inputs and returns a match confidence percentage.

Each of these is a precise, bounded component. None of them, alone, processes an invoice from arrival to ERP posting. None of them, alone, resolves a support ticket from submission to closure. That end-to-end outcome requires something more: an agentic AI system.

What Is Agentic AI? Definition and Core Characteristics

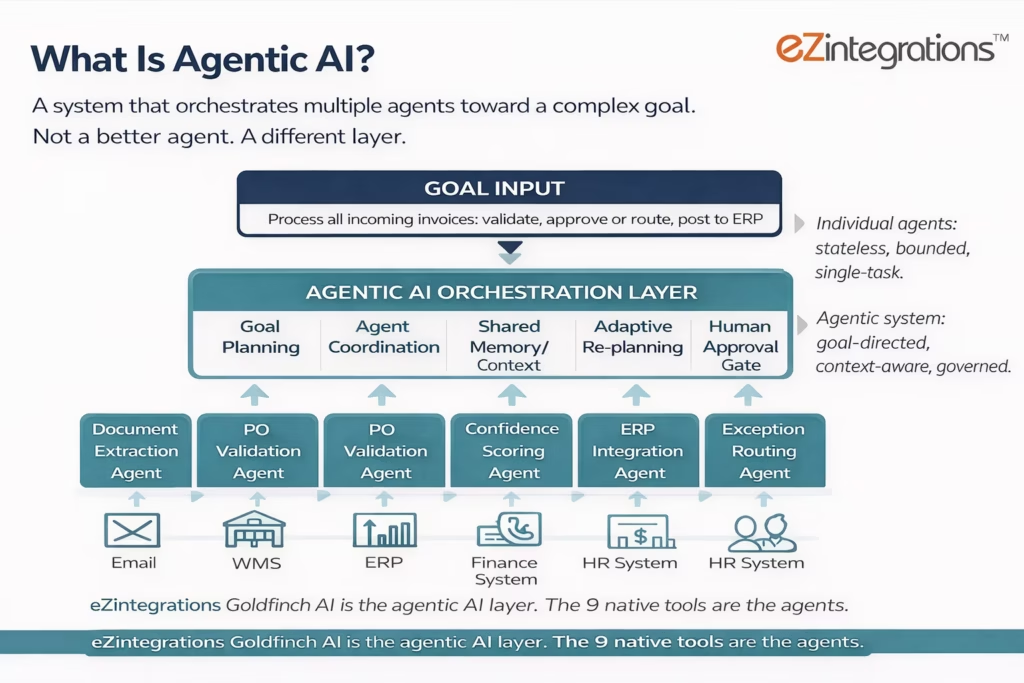

Agentic AI is a system that deploys, orchestrates, and governs multiple AI agents working together toward a complex, multi-step goal, maintaining shared context, managing tool calls, and adapting when agents encounter exceptions.

The key word is “system.” Agentic AI is not an upgraded agent. It is the environment in which agents operate. It provides the goal, the plan, the coordination logic, the shared memory, the governance controls, and the escalation paths that individual agents cannot provide for themselves.

MIT Sloan’s research defines the capability precisely: AI agents within an agentic system “can execute multi-step plans, use external tools, and interact with digital environments to function as powerful components within larger workflows.” The agents are the components. The agentic system is what makes those components produce end-to-end outcomes.

The five characteristics that define agentic AI:

Goal direction. The system receives a high-level goal: “Process all incoming invoices, validate against open POs, post approved invoices to the ERP, and route exceptions to the AP team.” It does not receive step-by-step instructions. It determines the steps.

Multi-agent orchestration. The system deploys a sequence or network of specialised agents to accomplish the goal. A document extraction agent handles the document. A validation agent handles the PO check. A confidence scoring agent determines whether to auto-approve or route for review. An integration agent handles the ERP posting. The orchestrator manages the handoffs.

Persistent context and memory. The system maintains shared context across all agent actions in a given workflow run. Agent 3 knows what Agent 1 found. Agent 5 knows that Agent 2 flagged a confidence issue. Individual agents are stateless. The agentic system is not.

Adaptive planning. When an agent produces an unexpected output, or encounters an exception outside its configuration scope, the agentic system evaluates the situation, adjusts the plan, routes to an alternative agent or path, or escalates to a Human Approval Gate. Individual agents cannot re-plan. The agentic system can.

Governance and auditability. The system logs every agent action, every tool call, every decision, and every escalation in a full audit trail. Confidence thresholds are configurable. Human Approval Gates are built in. Environment separation (Dev/Test/Production) is enforced. Individual agents have none of this by default.
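To make these characteristics concrete, here is a minimal Python sketch of an orchestrated invoice run. Everything in it is an illustrative assumption: the `RunContext` class, the three stub agents, the pipe-delimited invoice string, and the 0.9 threshold are invented for the example, and a fixed agent sequence stands in for real planning logic.

```python
from dataclasses import dataclass, field


@dataclass
class RunContext:
    """Shared state for one workflow run: what every agent has seen and done."""
    data: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)


# Stateless stub agents: each reads the shared data and returns new fields.
def extract(data: dict) -> dict:
    vendor, amount, po_number = data["raw"].split("|")
    return {"vendor": vendor, "amount": float(amount), "po_number": po_number}


def validate(data: dict) -> dict:
    open_pos = {"PO-17": 1200.0}  # hypothetical stand-in for an ERP lookup
    return {"po_match": open_pos.get(data["po_number"]) == data["amount"]}


def score(data: dict) -> dict:
    return {"confidence": 0.95 if data["po_match"] else 0.40}


def run_invoice_workflow(raw_invoice: str, threshold: float = 0.9) -> RunContext:
    ctx = RunContext(data={"raw": raw_invoice})
    for name, agent in [("extract", extract), ("validate", validate), ("score", score)]:
        output = agent(ctx.data)              # later agents see earlier results
        ctx.data.update(output)               # persistent context across the run
        ctx.audit_log.append((name, output))  # governance: log every action

    # Approval gate: low confidence goes to a human with the context attached.
    decision = "post_to_erp" if ctx.data["confidence"] >= threshold else "human_review"
    ctx.data["decision"] = decision
    ctx.audit_log.append(("route", {"decision": decision}))
    return ctx
```

Calling `run_invoice_workflow("Acme Ltd|1200.0|PO-17")` routes the invoice to ERP posting, while changing the amount so it no longer matches the open PO routes it to human review. In both cases the reviewer or auditor gets the full `audit_log` and the accumulated `data`, which is the point of keeping state in the system rather than in the agents.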

A February 2026 peer-reviewed taxonomy published in Information Fusion (ScienceDirect) describes this progression precisely: “modular agents to orchestrated, memory-persistent agentic AI,” with the key distinguishing factor being the orchestration layer and persistent memory that individual agents lack.

Agentic AI vs. AI Agents: The Six-Dimension Comparison

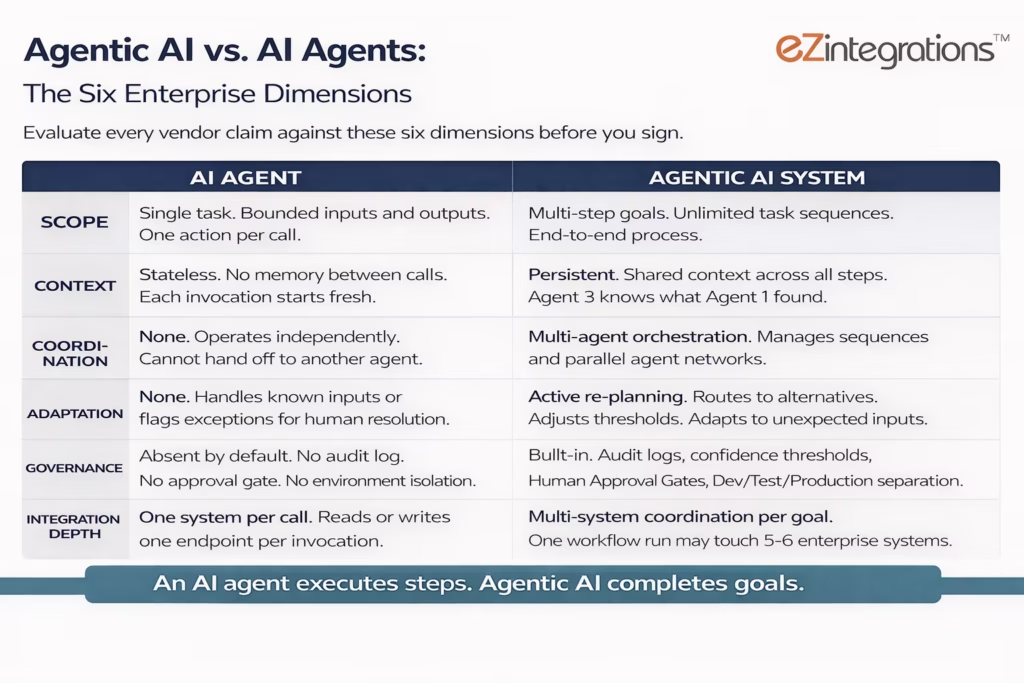

The six dimensions that separate agentic AI from individual AI agents map directly to enterprise procurement criteria. Evaluate any vendor claim against these six dimensions before signing a contract.

| Dimension | AI Agent | Agentic AI System |
|---|---|---|
| Scope | Single task, bounded inputs and outputs | Multi-step goals, unbounded task sequences |
| Context | Stateless: no memory between calls | Persistent: shared context across all steps |
| Coordination | None: operates independently | Multi-agent: orchestrates sequences and networks of agents |
| Adaptation | None: fails or flags on unexpected inputs | Active: re-plans, routes to alternatives, adjusts thresholds |
| Governance | Absent by default | Built-in: audit logs, approval gates, confidence thresholds |
| Integration depth | Reads from / writes to one system per call | Coordinates reads and writes across multiple systems per goal |

The practical implication of each dimension:

Scope. A ticket classification agent classifies a ticket. An agentic AI system classifies the ticket, retrieves the relevant KB article, drafts a resolution response, posts the draft back to the ticketing system, monitors for a customer reply, and escalates if no resolution is confirmed within 24 hours. The agent does one step. The agentic system handles the entire lifecycle.

Context. A PO validation agent checks one invoice against one PO. It does not know whether the vendor has three other open invoices under dispute. It does not know whether the AP team flagged this vendor last week. The agentic system maintains a shared context that includes everything the prior agents in this workflow run have seen and done.

Coordination. An invoice extraction agent returns a structured JSON object and stops. The agentic system passes that JSON to the validation agent, passes the validation result to the confidence scoring agent, passes the confidence score to the routing decision logic, and either posts to ERP or routes to a human reviewer with the full context pre-filled.

Adaptation. A validation agent that receives a partial match (the vendor name on the invoice differs slightly from the vendor master record) has two options: pass or fail. An agentic system has a third option: trigger a web crawling agent to verify the vendor’s legal name, compare the result, update the confidence score, and proceed with a revised routing decision.

Governance. A single agent has no built-in audit log. It either completed the task or raised an error. An agentic system logs every tool call, every decision threshold evaluation, every escalation, and every human approval action, in a format that satisfies enterprise compliance, audit, and security review requirements.

Integration depth. A single API tool call agent reads from one endpoint per invocation. An agentic workflow run for a single invoice may touch five or six enterprise systems: the email inbox (document retrieval), the vendor master (validation), the PO system (matching), the ERP (posting), the AP team’s notification system (exception routing), and the audit log store (compliance). No individual agent does all of this. The agentic system does.
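The adaptation dimension is the easiest to show in code. The sketch below, in Python, follows the partial vendor-name match described above: instead of a hard pass or fail, a low similarity score triggers a verification step before the routing decision. The `lookup_legal_name` function is a hypothetical stand-in for a web research agent, the registry contents and the 0.9 threshold are invented, and `difflib.SequenceMatcher` from the standard library is just one simple way to score string similarity.

```python
from difflib import SequenceMatcher


def name_similarity(a: str, b: str) -> float:
    """Similarity in [0, 1] between two names, ignoring case."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def lookup_legal_name(invoice_name: str) -> str:
    """Hypothetical stand-in for a web research agent."""
    registry = {"acme": "Acme Holdings Limited"}  # invented sample data
    words = invoice_name.lower().split()
    return registry.get(words[0], invoice_name) if words else invoice_name


def route_vendor_check(invoice_name: str, master_name: str, threshold: float = 0.9):
    steps = ["compare"]
    score = name_similarity(invoice_name, master_name)

    if score < threshold:
        # Re-plan: add a verification step instead of failing outright.
        steps.append("verify_legal_name")
        legal_name = lookup_legal_name(invoice_name)
        score = max(score, name_similarity(legal_name, master_name))

    decision = "proceed" if score >= threshold else "human_review"
    steps.append(decision)
    return decision, steps
```

With this sample data, `route_vendor_check("Acme Ltd", "Acme Holdings Limited")` takes the extra verification step and then proceeds, while a vendor the lookup cannot resolve ends at `human_review`. The returned `steps` list doubles as a small trace of which path the run took.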

The Agent Washing Problem: Why the Distinction Matters for Procurement

“Agent washing” is Gartner’s term for the rebranding of chatbots, RPA tools, and AI assistants as AI agents or agentic AI without substantive agentic capability. It is widespread in 2026, and it is a direct cause of failed projects.

Gartner’s June 2025 analysis is specific: “Many vendors are contributing to the hype by engaging in ‘agent washing’: the rebranding of existing products, such as AI assistants, robotic process automation (RPA) and chatbots, without substantial agentic capabilities.”

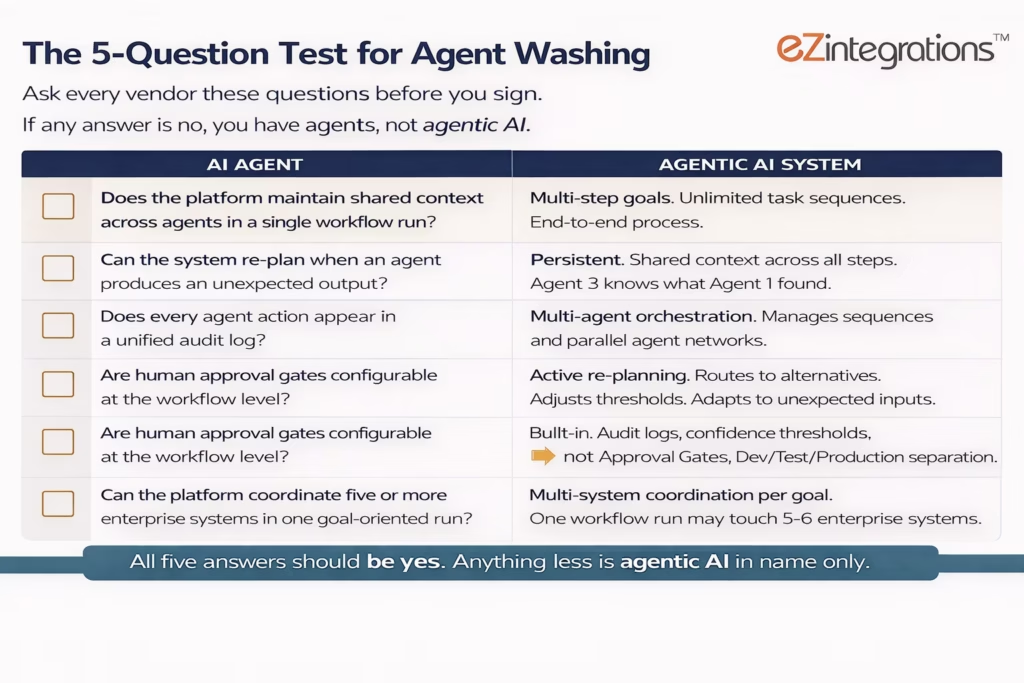

The procurement test is simple. Ask these five questions before signing:

Question 1: Can the platform maintain shared context across agents in a single workflow run? If each agent call is stateless, with no memory of prior agent outputs in the same run, the platform has no orchestration layer. You have a collection of independent components, not an agentic system.

Question 2: Can the platform re-plan when an agent produces an unexpected output? Ask the vendor to demonstrate what happens when the confidence score falls below threshold on a document extraction. Does the system route to an alternative path, trigger a secondary verification agent, or escalate to a Human Approval Gate? If the answer is “it flags an error and stops,” you have an agent, not agentic AI.

Question 3: Does every agent action appear in a unified audit log? Enterprise governance requires that every tool call, every decision, and every escalation is logged in a format that survives a compliance or security audit. Point solutions with individual agent logs that don’t aggregate do not meet this requirement.

Question 4: Are human approval gates configurable at the workflow level? The ability to set confidence thresholds and route exceptions to a named human reviewer with context pre-filled is a core agentic AI capability. If human intervention requires leaving the platform or using a separate notification tool, the platform is not production-ready for enterprise.

Question 5: Can the platform coordinate agent outputs across five or more enterprise systems in a single goal-oriented run? The defining capability of agentic AI is multi-system coordination within a single goal-directed workflow. A platform that calls one external API per agent, but cannot coordinate a six-system workflow run without manual configuration between each step, is providing individual agents, not agentic AI.
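One way to keep a vendor evaluation honest is to record the five answers explicitly and treat any "no" as disqualifying. The Python sketch below does that; the `PlatformAnswers` field names are shorthand invented for this example, and the all-five-must-pass rule is this guide's test, not an industry standard.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class PlatformAnswers:
    shared_context: bool                 # Question 1
    replans_on_unexpected_output: bool   # Question 2
    unified_audit_log: bool              # Question 3
    workflow_level_approval_gates: bool  # Question 4
    multi_system_coordination: bool      # Question 5


def failed_checks(answers: PlatformAnswers) -> list[str]:
    """Names of the questions the platform answered 'no' to."""
    return [f.name for f in fields(answers) if not getattr(answers, f.name)]


def is_agentic(answers: PlatformAnswers) -> bool:
    """True only when all five answers are 'yes'."""
    return not failed_checks(answers)
```

Recording the failed checks by name, rather than a single score, gives the procurement team a concrete list to take back to the vendor.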

The McKinsey spring 2025 survey found that 35% of respondents had adopted AI agents by 2023, with 44% more planning to deploy soon. 96% of enterprise IT leaders plan to expand AI agent use in the next 12 months. The scale of deployment pressure makes agent washing risk high: vendors are incentivised to meet the market demand with whatever they have, whether or not it constitutes genuine agentic capability.

When Your Workflow Needs an AI Agent vs. When It Needs Agentic AI

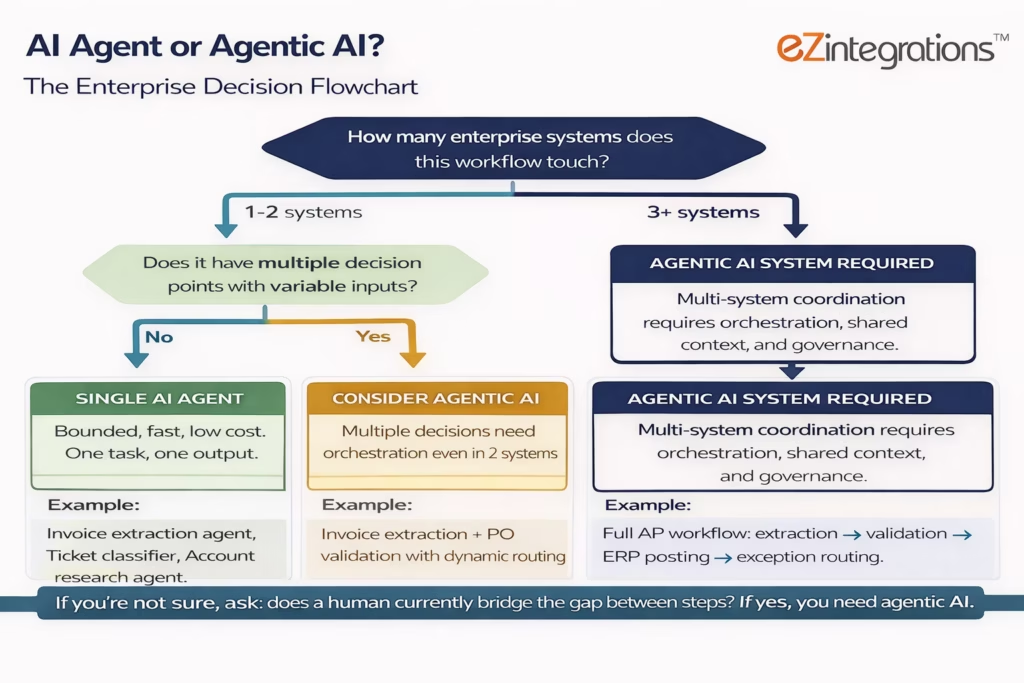

The right choice between an AI agent and an agentic AI system depends on three process characteristics: how many systems are involved, how variable the inputs are, and whether the workflow spans multiple decision points.

Use this decision guide:

| Process characteristic | Use AI Agents | Use Agentic AI |
|---|---|---|
| Systems involved | 1-2 systems | 3+ systems |
| Workflow steps | 1-3 bounded steps | 4+ steps with dependencies |
| Input variability | Structured, predictable format | Variable format, unstructured content |
| Decision points | 0-1 decision gates | 2+ decision gates or dynamic routing |
| Exception frequency | Rare, handled externally | Regular, must be handled within the workflow |
| Governance requirements | Basic logging | Full audit trail, confidence thresholds, approval gates |
| Process outcome | Single output returned | End-to-end business outcome completed |

Deploy a single AI agent when:

Your process involves one well-defined task with predictable, structured inputs. You want to extract data from a standard form, classify text against a defined taxonomy, validate a field against a reference record, or retrieve structured information from a known source. The task is repeatable, the inputs are consistent, and the output is one data object or one action. A single agent is faster to configure, cheaper to run, and easier to audit.

Deploy an agentic AI system when:

Your process involves multiple steps across multiple systems, with variable inputs, multiple decision points, and a requirement to complete a business outcome without a human touching every step. Processing an invoice from arrival to ERP posting. Qualifying a lead from enrichment to CRM update to sales rep notification. Onboarding a new employee across IT, HR, and Facilities systems. Triaging and resolving a support ticket from submission to customer confirmation. These are agentic AI workflows. No individual agent completes them. The orchestration layer is what makes them work.

The most common mistake: deploying individual agents for each step of a multi-step workflow, without an orchestration layer, and expecting the agents to coordinate themselves. They won’t. You’ll end up with four agents, four separate configurations, four separate audit logs, and a human manually passing outputs between them. That is not automation. That is a more complicated version of the manual process you were trying to replace.
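The decision guide in this section can be expressed as a small rule set. The Python sketch below encodes five of the table's rows; the `Workflow` fields and the rule that any single signal is enough to recommend agentic AI are simplifying assumptions for illustration, so treat the output as a prompt for discussion rather than a verdict.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workflow:
    systems: int
    steps: int
    decision_gates: int
    variable_inputs: bool
    end_to_end_outcome: bool


def agentic_signals(w: Workflow) -> list[str]:
    """Rows of the decision guide that point toward an agentic AI system."""
    checks = [
        (w.systems >= 3, "3+ systems"),
        (w.steps >= 4, "4+ steps with dependencies"),
        (w.decision_gates >= 2, "2+ decision gates"),
        (w.variable_inputs, "variable or unstructured inputs"),
        (w.end_to_end_outcome, "end-to-end business outcome"),
    ]
    return [label for hit, label in checks if hit]


def recommend(w: Workflow) -> str:
    # Assumption: one signal is enough to justify an orchestration layer.
    return "agentic AI" if agentic_signals(w) else "single AI agent"
```

For example, a form-extraction task described as `Workflow(1, 1, 0, False, False)` comes back as a single AI agent, while invoice-to-ERP processing across five systems returns agentic AI along with the list of reasons.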

The Architecture Layer: How AI Agents and Agentic AI Work Together

AI agents and agentic AI are not competing choices. They are two layers of the same architecture. The confusion arises because vendors market each layer separately, as if deploying individual agents is equivalent to deploying an agentic system.

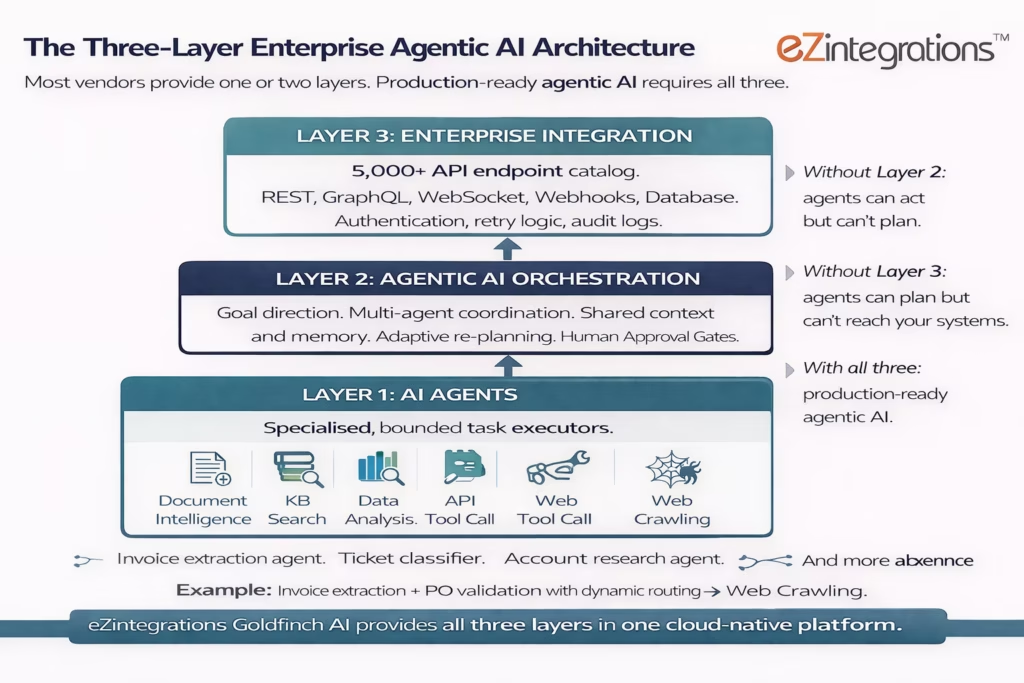

The correct architecture has three layers:

Layer 1: Individual AI Agents (task execution). Each agent is a specialised, bounded component optimised for one type of task. A document intelligence agent for unstructured document extraction. A knowledge base search agent for policy retrieval. A data analysis agent for structured data validation. A web crawling agent for external research. An API tool call agent for system reads and writes. These are the workers.

Layer 2: Agentic AI Orchestration (goal direction and coordination). The orchestration layer receives the goal, determines the plan, sequences the agent calls, maintains shared context across the run, evaluates intermediate results, adapts the plan when exceptions occur, and routes to Human Approval Gates when confidence thresholds require human judgment. This is the manager.

Layer 3: Enterprise Integration (system connectivity). Every agent call that reads from or writes to an enterprise system requires a reliable, governed integration layer: API catalog coverage, authentication management, error handling, retry logic, and audit logging at the integration level. Without this layer, the agents and the orchestrator are disconnected from the enterprise systems where the actual work lives. This is the infrastructure.

Platforms that provide Layer 2 without Layer 3 produce agents that can plan but can’t act on enterprise systems. Platforms that provide Layer 3 without Layer 2 produce automations that can act but can’t plan. Platforms that provide Layers 1, 2, and 3 together are production-ready agentic AI.
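Layer 3 is the least glamorous of the three, so a short sketch helps show what "error handling, retry logic, and audit logging at the integration level" means in practice. The Python below wraps any system call in retries with exponential backoff and records every attempt. The names `call_with_retry` and `TransientError`, the default of three attempts, and the log record shape are all assumptions made for the example.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """A failure worth retrying, such as a timeout or a rate limit."""


def call_with_retry(
    call: Callable[[], T],
    audit_log: list,
    attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `call`, retrying transient failures and logging every attempt."""
    for attempt in range(1, attempts + 1):
        try:
            result = call()
        except TransientError as exc:
            audit_log.append({"attempt": attempt, "status": "error", "detail": str(exc)})
            if attempt == attempts:
                raise  # out of retries: surface the failure to the orchestrator
            sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff
        else:
            audit_log.append({"attempt": attempt, "status": "ok"})
            return result
    raise ValueError("attempts must be at least 1")
```

The `sleep` parameter is injectable so the backoff can be skipped in tests. When retries run out, the exception propagates upward, which is where the orchestration layer decides whether to re-plan or escalate.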

Summary comparison across eleven capabilities:

| Capability | Single AI Agents | Multi-Agent Platform (without integration) | Agentic AI with Integration (eZintegrations Goldfinch AI) |
|---|---|---|---|
| Individual task execution | Yes | Yes | Yes, via 9 native tools |
| Multi-step goal orchestration | No | Yes | Yes |
| Shared context across agents | No | Yes | Yes |
| Adaptive re-planning on exceptions | No | Partial | Yes |
| Human Approval Gate (configurable) | No | Partial | Yes |
| Full unified audit log | No | Partial | Yes |
| 5,000+ enterprise API endpoint coverage | No | No | Yes |
| No-code configuration (non-developer) | Varies | Varies | Yes |
| Dev/Test/Production environment isolation | No | Partial | Yes |
| MCP protocol (expose workflows to external agents) | No | No | Yes |
| Pre-built templates in Automation Hub | No | Varies | 1,000+ |

The critical gap in most “multi-agent platform without integration” offerings is API coverage. A platform that orchestrates agents well but covers only 50 to 200 pre-built connectors forces your team to write custom integration code for every enterprise system not on the connector list. In a typical enterprise with 50 to 200 deployed SaaS applications, that gap becomes the bottleneck that prevents production deployment.

Pricing Comparison

Pricing for AI agent and agentic AI platforms varies significantly based on architecture scope and integration coverage. Understanding the cost structure before committing to a platform prevents the budget overruns that contribute to Gartner’s 40% project cancellation rate.

Individual AI agent tools and frameworks (open source or API-based): Low initial cost, typically $0 to $500/month for API access to LLMs. However, total cost of ownership climbs once you add integration development, the orchestration layer build, governance tooling, and ongoing maintenance. MIT Sloan’s 2025 research found that 80% of the work in enterprise AI deployment is data engineering and system integration, not AI modelling. With developers costing $120K+ per year, “free” open-source agents are rarely free for long.

Standalone agent platform tools (single-purpose, no enterprise integration layer): Typically $50 to $500/month for SaaS point solutions. Low per-tool cost, high total cost when multiplied by the number of use cases requiring separate tools, separate configurations, and separate governance oversight. No unified audit log. No shared context across tools.

eZintegrations Goldfinch AI: Transparent pricing starting from $120/month per automation for LLM/AI automations (plus AI credits), with $150/month for the highest tier, on annual billing. No platform fee. No connector fees. Dev and Test environments at approximately one-third of production cost each. The full eZintegrations pricing page shows all tiers. Every Goldfinch AI automation includes the full orchestration layer, 9 native tools, 5,000+ API endpoint access, Human Approval Gates, unified audit logging, and Dev/Test/Production environment isolation.

The TCO calculation favours integrated agentic AI platforms for any organisation deploying more than two to three automation use cases. The orchestration layer, governance tooling, and integration coverage are built-in costs at $120 to $150/month per automation, not additional development costs at $120K+ per developer-year.
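The arithmetic behind that comparison is simple enough to sketch in Python. The figures come from the pricing described above ($120 to $150 per automation per month, Dev and Test at roughly one-third of production each, developers at $120K per year), but the functions themselves are illustrative: they exclude AI credits, treat "approximately one-third" as exactly one-third, and use salary alone as a rough proxy for build cost.

```python
def annual_platform_cost(
    automations: int,
    monthly_rate: float = 150.0,
    include_dev_test: bool = True,
) -> float:
    """Yearly subscription cost in dollars, excluding AI credits."""
    production = automations * monthly_rate * 12
    # Dev and Test environments: assumed to cost one-third of production each.
    dev_test = 2 * production / 3 if include_dev_test else 0.0
    return production + dev_test


def share_of_developer_year(cost: float, developer_salary: float = 120_000.0) -> float:
    """Express a yearly cost as a fraction of one developer-year."""
    return cost / developer_salary
```

Under these assumptions, three automations at the top tier cost $5,400 per year for production alone (3 × $150 × 12) and $9,000 with Dev and Test environments included. The comparison only holds if the custom build would in fact consume a meaningful share of a developer's year, so it is worth checking against your own integration estimates.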

Who Should Deploy Each: Decision Guide for Technology Leaders

Both AI agents and agentic AI have a legitimate place in an enterprise architecture. The question is not which is “better” in the abstract: it is which your specific workflow requires.

Choose individual AI agents when:

Your use case is a single, well-defined task that touches one or two systems, has structured and predictable inputs, requires no coordination with other agents, and has simple exception handling. Examples: a standalone document extraction agent for a single document type, a text classification agent for a single channel, a data validation agent for a single field type. These are fast to configure, cost-efficient for high-volume single tasks, and easy to audit in isolation.

Choose an agentic AI system when:

Your use case involves multiple steps, multiple systems, variable inputs, multiple decision points, or a requirement to complete a business outcome end-to-end. Any process where a human currently acts as the bridge between two or more systems is a candidate for agentic AI. AP invoice processing, IT ticket resolution, HR onboarding orchestration, sales lead qualification, and supply chain PO exception handling all qualify. These processes require orchestration, shared context, adaptive planning, and governance that individual agents cannot provide.

Choose eZintegrations Goldfinch AI when:

You need both layers in one governed platform, without writing integration code for every enterprise system. Your team includes operations managers and domain experts who need to configure agents without developer support. You have more than three automation use cases in scope and need a consistent governance framework across all of them. You need MCP protocol exposure to connect Goldfinch AI agents to external AI systems like Claude or ChatGPT. And you want transparent pricing with no per-connector fees for a 5,000+ endpoint API catalog.

Bottom Line: Which Capability Does Your Enterprise Actually Need?

The bottom line is this: you almost certainly need agentic AI, and you probably already know it. The use cases that keep coming up in enterprise AI roadmaps (AP automation, IT support resolution, HR orchestration, sales intelligence, customer service resolution) are all multi-step, multi-system, multi-decision-point processes. They are agentic AI use cases, not single-agent use cases.

The question is not whether to deploy agentic AI. It is whether to deploy it on a platform that provides all three architecture layers, or to assemble them manually from individual components and discover the gap when the first production deployment encounters an exception it cannot handle.

Gartner’s forecast is clear: it predicts that 40% of enterprise applications will include task-specific AI agents by end of 2026. The transition is not optional for technology leaders who want to remain competitive. The choice is in how you build: on a governed, integration-complete agentic AI platform, or on a collection of individual components that don’t coordinate.

eZintegrations Goldfinch AI is the agentic AI layer for enterprise teams that need production results in weeks, not quarters. 9 native tools covering every agent capability required for enterprise workflows. 5,000+ API endpoint coverage for any enterprise system without connector fees. No-code configuration for operations teams. Full governance: audit logs, approval gates, environment isolation. And a 1,000+ template Automation Hub that means you start with a working configuration, not a blank canvas.

Book a free demo to see Goldfinch AI handle a real end-to-end agentic workflow on your specific use case and enterprise system stack.

Frequently Asked Questions

1. What is the difference between an AI agent and agentic AI?

An AI agent is a single-task software component that receives an input, applies bounded logic, and performs one action. Agentic AI is a system architecture that orchestrates multiple agents toward a complex, multi-step objective with shared context, adaptive planning, governance controls, and human approval gates. Individual agents perform discrete actions, while the agentic system coordinates them to produce end-to-end business outcomes.

2. Is agentic AI just a collection of AI agents?

No, and the distinction is important for enterprise procurement. A collection of independent agents without an orchestration layer lacks shared context, adaptive re-planning, unified governance, and coordinated action across systems. Agentic AI introduces the orchestration layer that manages agent coordination, context sharing, and execution order. Without this layer, organizations simply have isolated agents that cannot collaborate to complete multi-step business workflows.

3. Can you use AI agents without agentic AI?

Yes, for bounded, single-task scenarios. Examples include invoice data extraction, ticket classification, or data validation, where the agent performs a single action and returns a result. However, these agents stop after completing their task. Workflows that involve multiple steps, multiple systems, or multiple decision points require an agentic AI orchestration layer to coordinate the sequence of actions and manage dependencies.

4. What is agent washing and how do I identify it?

Agent washing is a term used by analysts, including Gartner, to describe the rebranding of existing chatbots, RPA tools, or AI assistants as AI agents or agentic AI without delivering genuine agentic capabilities. A practical evaluation test includes five questions. Does the platform maintain shared context across agents during execution? Can it re-plan when an agent produces unexpected output? Does every action appear in a unified audit log? Are human approval gates configurable at the workflow level? Can the platform coordinate multiple enterprise systems in a single goal-directed run? A genuine agentic AI platform answers yes to all five.

5. How does eZintegrations Goldfinch AI compare to standalone AI agent tools for enterprise workflows?

Standalone AI agent tools typically focus on the first architectural layer, which is individual task execution. They do not provide orchestration for multi-step goal management, shared context, adaptive re-planning, or human approval gates, and they generally lack deep enterprise system integration. eZintegrations Goldfinch AI provides task execution, orchestration, and enterprise integration coverage within a single platform, including more than five thousand API endpoints; support for REST, GraphQL, WebSocket, Webhooks, and database systems; and over one thousand pre-built templates.

6. What are the best agentic AI use cases for enterprise in 2026?

High-return enterprise use cases for agentic AI include accounts payable invoice processing, IT support ticket triage and resolution, sales account intelligence and lead qualification, HR onboarding and offboarding automation, customer service resolution with escalation prediction, and supply chain supplier risk monitoring with purchase order exception handling. Each scenario involves multiple systems, multiple steps, and several decision points, making them well suited to agentic AI systems that can coordinate actions and complete business outcomes without requiring manual intervention at every stage.

Conclusion

AI agents and agentic AI are two layers of the same architecture, not two competing options. You need agents for task execution and you need agentic AI for end-to-end process orchestration. Confusing the two, or buying one when you need both, feeds directly into the 40%+ cancellation rate Gartner predicts for agentic AI projects by end of 2027.

The practical framework is direct: if your workflow touches more than two systems, has two or more decision points, or requires a business outcome completed without a human bridging the steps, you need agentic AI. If your workflow is a single, bounded task with predictable inputs, a single agent is sufficient.

For enterprise teams that need production-ready agentic AI, with all three architecture layers, transparent pricing, no-code configuration, and 5,000+ API endpoint coverage, eZintegrations Goldfinch AI is built for exactly that. 9 native tools. 1,000+ templates. Full governance. Available today.

Book a free demo to see the difference between a single agent call and a full agentic AI workflow on your specific use case.